
Propagation

Decades of programming experience have taken a toll on our collective imagination. We come from a culture of scarcity, where computation and memory were expensive, and concurrency was difficult to arrange and control. This is no longer true. But our languages, our algorithms, and our architectural ideas are based on those assumptions. Our languages are basically sequential and directional—even functional languages assume that computation is organized around values percolating up through expression trees. Multidirectional constraints are hard to express in functional languages.

Escaping the Von Neumann straitjacket

The propagator model of computation [99] provides one avenue of escape. The propagator model is built on the idea that the basic computational elements are propagators, autonomous independent machines interconnected by shared cells through which they communicate. Each propagator machine continuously examines the cells it is connected to, and adds information to some cells based on computations it can make from information it can get from others. Cells accumulate information and propagators produce information.

Since the propagator infrastructure is based on propagation of data through interconnected independent machines, propagator structures are better expressed as wiring diagrams than as expression trees. In such a system partial results are useful, even though they are not complete. For example, the usual way to compute a square root is by successive refinement using Heron's method. In traditional programming, the result of a square root computation is not available to subsequent computations until the required error tolerance is achieved. By contrast, in an analog electrical circuit that performed the same function, the partial results could be used by the next stages as first approximations to their computations. This is not an analog/digital problem; it is organizational. In a propagator mechanism the partial results of a digital process can be made available without waiting for the final result.
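A minimal sketch of this organizational point, written in Python rather than the book's Scheme so that it runs standalone. The names `heron_sqrt` and `first_within` are hypothetical. The generator exposes every intermediate approximation of Heron's method, so a consumer that needs only a rough value can stop early, while a stricter consumer keeps pulling from the same stream.

```python
def heron_sqrt(x, guess=1.0):
    """Yield successive Heron approximations to sqrt(x), with no stopping rule."""
    while True:
        yield guess
        guess = (guess + x / guess) / 2.0  # average the guess with x / guess


def first_within(x, tolerance):
    """Consume partial results until one squares to within tolerance of x."""
    for steps, g in enumerate(heron_sqrt(x)):
        if abs(g * g - x) <= tolerance:
            return g, steps


# Two consumers with different needs read from the same kind of stream.
rough, rough_steps = first_within(2.0, 1e-1)
fine, fine_steps = first_within(2.0, 1e-12)
```

The rough consumer is satisfied after fewer refinement steps than the fine one; neither has to wait for a tolerance chosen by the producer.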

Filling in details

This makes a natural computational structure for building powerful systems that fill in details. The structure is additive: new ways to contribute information can be included just by adding new parts to a network, whether simple propagators or entire subnetworks. For example, if an uncertain quantity is represented as a range, a new way of computing an upper bound can be included without disturbing any other part of the network.

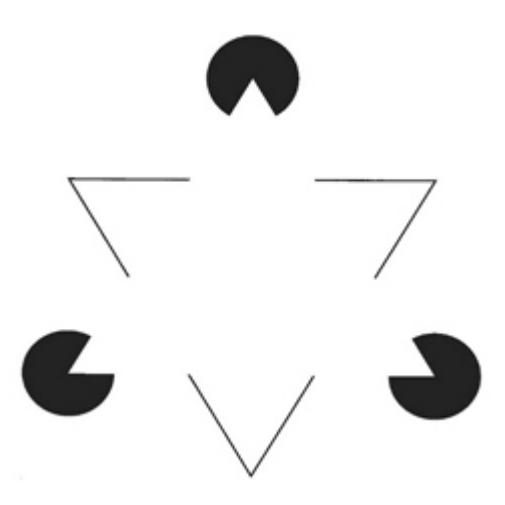

Filling in details plays an important role in all ways we use information. Consider Kanizsa's triangle (figure 7.1), for example. Given a few fragmentary pieces of evidence we see a white triangle (on a white background!) that isn't there (and that is typically described as brighter than the background). We have filled in the missing details of an implied figure. When we hear speech we fill in the details from the observed context, using the regularities of phonology, morphology, syntax, and semantics. An expert electrical circuit designer who sees a partial schematic diagram fills in details that make a sensible mechanism. This filling in of details is not sequential; it happens opportunistically wherever local deductions can be made from surrounding clues. Deductions may compound, so that if a piece is filled in it forms a new clue for the continuing completion process.

Figure 7.1 Kanizsa's triangle is a classic example of a completion illusion. The white triangle is not there!

Dependencies and backtracking

Using layering, we incorporate dependencies into the propagator infrastructure in a natural and efficient way. This allows the system to track and preserve information about the provenance of each value. Provenance can be used to provide a coherent explanation of how a value was derived, citing the sources and the rules by which the source material was combined. This is especially important when we have multiple sources, each providing partial information about a value. Dependency tracking also provides a substrate for debugging (and possibly for introspective self-debugging).

Besides foundation beliefs, hypotheticals may be introduced by amb machines, which provide alternative values supported by premises that may be discarded without pain. Unlike systems modeled on expression-based languages such as Lisp, there is no spurious control flow arising from the expression structure to pollute our dependencies and force expensive recomputation of already-computed values when backtracking.

Degeneracy, redundancy, and parallelism

The propagator model incorporates mechanisms to support the integration of redundant (actually degenerate) subsystems so that a problem can be addressed in multiple disparate ways. Multiply redundant designs can be effective in combating attacks: if there is no single thread of execution that can be subverted, an attack that disables or delays one of the paths will not impede the computation, because an alternate path can substitute. Redundant and degenerate parallel computations contribute to integrity and resiliency: computations that proceed along variant paths can be checked to assure integrity. The work of subverting parallel computations increases because of the cross-thread invariants.

The propagator model is essentially concurrent, distributed, and scalable, with strong isolation and a built-in assumption of parallel computation. Multiple independent propagators are computing and contributing to the information in the shared cells, where the information is merged and contradictions are noted and acted upon.

7.1 An example: Distances to stars

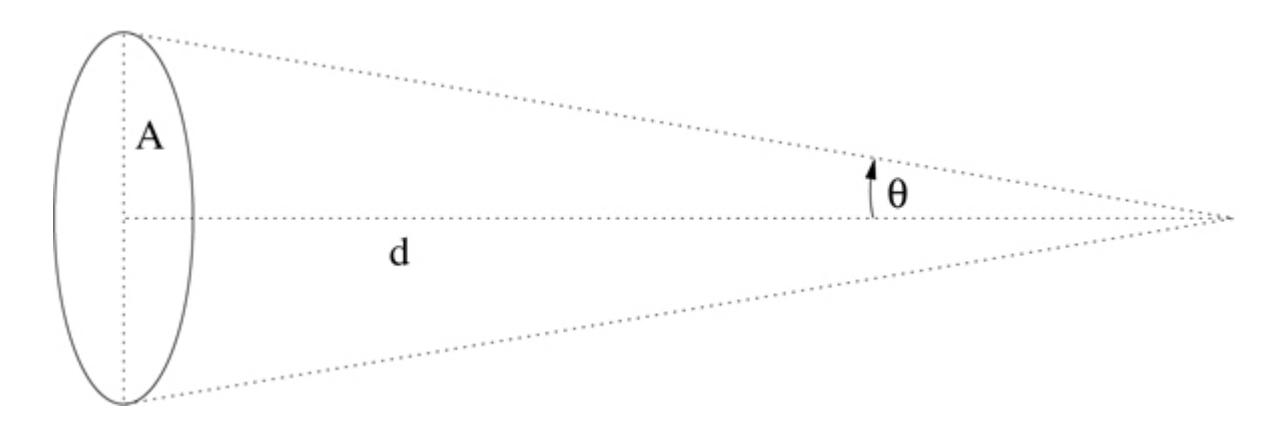

Consider a problem of astronomy, the estimation of the distance to stars. This is very hard, because the distances are enormous. Even for the closest stars, for which we can use parallax measurements, with the radius of the Earth's orbit as a baseline, the angular variation of the position of a star is a small part of an arcsecond. Indeed, the unit of distance for stellar distances is the parsec, which is the altitude of a triangle based on the diameter of the Earth's orbit, where the vertex angle is 2 arcseconds. The parallax is measured by observing the variation of position of the star against the background as the Earth revolves annually around the Sun. (See figure 7.2.)

Figure 7.2 The angle θ of the triangle to the distant star erected on the semimajor axis of the Earth's orbit around the Sun is called the parallax of the star. Note that A/d = tan(θ). If θ = 1 arcsecond then the distance d is defined to be 1 parsec. The length of the semimajor axis A is 1 Astronomical Unit (AU) = 149597870700 meters.

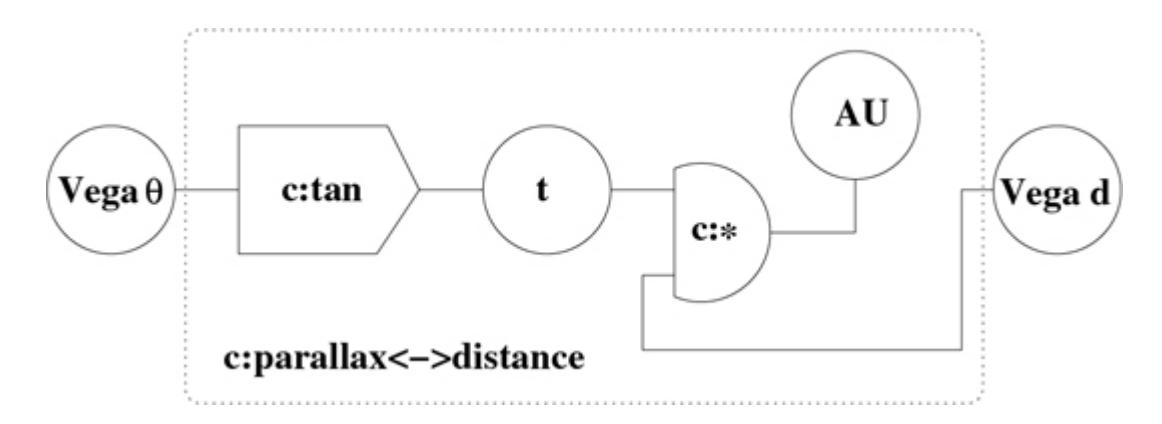

We define a propagator that relates the parallax of a star, in radians, to the distance to the star, in parsecs:

(define-c:prop (c:parallax<->distance parallax distance)

(let-cells (t (AU AU-in-parsecs))

(c:tan parallax t)

(c:* t distance AU)))

Here, the special form define-c:prop defines a special kind of procedure, a constructor named c:parallax<->distance. When c:parallax<->distance is given two cells, locally named parallax and distance, as its arguments, it constructs a constraint propagator that relates those cells. Using the special form let-cells it creates two new cells, one locally named t, and the other locally named AU. The cell named t is not initialized; the cell named AU is initialized to the numerical value of the Astronomical Unit, the semimajor axis of the Earth's orbit, in parsecs. The cell named parallax and the cell named t are connected by a primitive constraint propagator constructed by c:tan, imposing the constraint that any value held by t must be the tangent of the value held by parallax. Similarly, the cells named t, distance, and AU are connected by a primitive constraint propagator constructed by c:*, imposing the constraint that the product of the value in cell t and the value in cell distance is the value in AU.
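For reference, the two constraints are a rearrangement of the relation in figure 7.2. The value of the Astronomical Unit in parsecs follows from the definition that a parallax of 1 arcsecond corresponds to 1 parsec; the symbol A_pc below is introduced here only for this note.

```latex
\begin{aligned}
t &= \tan\theta, \qquad t \cdot d = A
  \quad\Longleftrightarrow\quad \frac{A}{d} = \tan\theta, \\
A_{\mathrm{pc}} &= \tan(1'') \cdot 1\,\mathrm{pc}
  = \tan\!\left(\frac{\pi}{648000}\right)\mathrm{pc}
  \approx 4.8481 \times 10^{-6}\,\mathrm{pc}.
\end{aligned}
```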

Let's think about the distance to the star Vega, as measured by parallax. We make two cells, Vega-parallax-distance for the distance, and Vega-parallax for the parallax angle:

(define-cell Vega-parallax-distance)

(define-cell Vega-parallax)

Now we can interconnect our cells with the propagator constructor that we just defined:

(c:parallax<->distance Vega-parallax Vega-parallax-distance)

The system of cells and propagators so constructed is illustrated in figure 7.3.

Figure 7.3 Here we see a "wiring diagram" of the propagator system constructed by calling c:parallax<->distance on the cells named Vega-parallax-distance (Vega d in the diagram) and Vega-parallax (Vega θ in the diagram). Circles indicate cells, and other shapes indicate propagators interconnecting the cells. These propagators are not directional; they enforce algebraic constraints. By convention we name constraint-propagator constructors with the prefix c:. For example, the propagator constructed by c:* enforces the constraint that the product of the contents of the cell t and the contents of the cell Vega-parallax-distance is the contents of the cell AU.

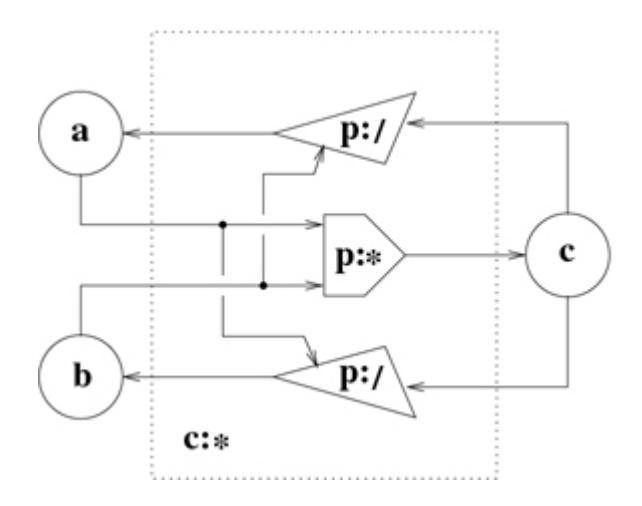

The constraint propagators are themselves made up of directional propagators, as shown in figure 7.4. A directional propagator, such as the multiplier constructed by p:*, adjusts the value in the product cell to be consistent with the values in the multiplier and multiplicand cells. It is entirely appropriate to mix directional propagators and constraint propagators in a propagator system. 1
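The decomposition of a constraint into directional parts can be sketched in a few lines of Python (used here instead of the book's Scheme so the example is self-contained). Everything in this block is a hypothetical toy: cells hold a single number or `None`, there is no merging or scheduling, and division by zero is not handled. It only illustrates how one multiplier and two dividers together behave like a constraint.

```python
class Cell:
    """Toy cell: holds one number, or None when nothing is known yet."""
    def __init__(self):
        self.value = None


def directional(f, inputs, output):
    """Return a thunk that writes f(inputs) to output once all inputs are known."""
    def run():
        values = [c.value for c in inputs]
        if any(v is None for v in values) or output.value is not None:
            return False              # nothing to do
        output.value = f(*values)
        return True                   # made progress
    return run


def c_times(a, b, c):
    """Constraint a * b = c, assembled from one multiplier and two dividers."""
    return [
        directional(lambda x, y: x * y, [a, b], c),   # plays the role of p:*
        directional(lambda z, y: z / y, [c, b], a),   # plays the role of p:/
        directional(lambda z, x: z / x, [c, a], b),   # plays the role of p:/
    ]


def run_to_quiescence(propagators):
    """Keep running every propagator until none of them makes progress."""
    while any([p() for p in propagators]):
        pass


a, b, c = Cell(), Cell(), Cell()
network = c_times(a, b, c)
a.value, c.value = 4.0, 12.0          # one factor and the product are known
run_to_quiescence(network)            # the divider fills in b
```

Whichever two of the three cells are supplied, the matching directional part fires and fills in the third.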

Figure 7.4 The constraint propagator constructed by c:* is made up of three directional propagators. By convention we name the directional-propagator constructors with the prefix p:. The directional multiplier propagator, constructed by p:*, forces the value in c to be the product of the values in cells a and b. The divider propagators, constructed by p:/, force the value in their quotient cells (a and b) to be the result of dividing the value in the dividend cell (c) by the value in the divisor cells (b and a).

Now let's use this small system to compute. Friedrich G. W. von Struve in 1837 published an estimate of the parallax of Vega: 0.125" ± 0.05". 2 This was the first plausible published measurement of the parallax of a star, but because his data was sparse and he later contradicted that data, the credit for the first real measurement goes to Friedrich Wilhelm Bessel, who did a careful measurement of the parallax of the star 61 Cygni in 1838. However, Struve's estimate is quite close to the current best estimate of the parallax of Vega. We tell our propagator system Struve's estimate of 125 milliarcseconds plus or minus 50 milliarcseconds:

(tell! Vega-parallax

(+->interval (mas->radians 125) (mas->radians 50))

'FGWvonStruve1837)

The procedure tell! takes three arguments: a propagator cell, a value for that cell, and a premise symbol describing the provenance of the data. The procedure mas->radians converts milliarcseconds to radians. The procedure +->interval makes an interval centered on its first argument:

(define (+->interval value delta)

(make-interval (n:- value delta) (n:+ value delta)))

So the Vega-parallax cell is given the interval

(+->interval (mas->radians 125) (mas->radians 50))

(interval 3.6361026083215196e-7 8.48423941941688e-7)

Struve's estimate of the error in his result was a pretty big fraction of the estimated parallax. So his estimate for the distance to Vega is pretty wide (roughly 5.7 to 13.3, or 9.5 ± 3.8 parsecs):

(get-value-in Vega-parallax-distance)

(interval 5.7142857143291135 13.33333333343721)

(interval>+- (get-value-in Vega-parallax-distance))

(+- 9.523809523883163 3.8095238095540473)
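These numbers can be reproduced outside the propagator system. The following Python sketch (Python rather than Scheme, so it runs without the book's library) recomputes the distance interval from Struve's parallax using d = AU / tan(θ). The function and constant names are hypothetical counterparts of the procedures in the text, not part of the book's code.

```python
import math

MAS_TO_RAD = math.pi / (180 * 3600 * 1000)    # one milliarcsecond in radians
AU_IN_PARSECS = math.tan(1000 * MAS_TO_RAD)   # tan(1 arcsecond), by definition of the parsec


def plus_minus_interval(value, delta):
    """Counterpart of +->interval: a (low, high) pair centered on value."""
    return (value - delta, value + delta)


def parallax_to_distance(parallax):
    """Map a parallax interval (radians) to a distance interval (parsecs).

    d = AU / tan(theta) decreases as theta grows, so the bounds swap.
    """
    low, high = parallax
    return (AU_IN_PARSECS / math.tan(high), AU_IN_PARSECS / math.tan(low))


struve = plus_minus_interval(125 * MAS_TO_RAD, 50 * MAS_TO_RAD)
d_low, d_high = parallax_to_distance(struve)
center, half_width = (d_low + d_high) / 2, (d_high - d_low) / 2
```

The wide distance interval comes from the swap of bounds: the smallest plausible parallax (75 milliarcseconds) gives the largest distance, and the largest (175 milliarcseconds) gives the smallest.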

This interval value is supported by the premise FGWvonStruve1837.

(get-premises Vega-parallax-distance)

(support-set fgwvonstruve1837)

We will use a procedure inquire that nicely shows the value of the cell and the support for that value: 3

(inquire Vega-parallax-distance)

((vega-parallax-distance)

(has-value (interval 5.7143e0 1.3333e1))

(depends-on fgwvonstruve1837)

(because

((p:/ c:* c:parallax<->distance)

(au 4.8481e-6)

(t (interval 3.6361e-7 8.4842e-7)))))

A tighter bound, reported by Russell et al. in 1982 [106], is

(tell! Vega-parallax

(+->interval (mas->radians 124.3) (mas->radians 4.9))

'JRussell-etal1982)

which seems pretty close to the center of Struve's estimate. With that measurement, the distance estimate is narrowed to

(inquire Vega-parallax-distance)

((vega-parallax-distance)

(has-value (interval 7.7399 8.3752))

(depends-on jrussell-etal1982))

Notice that our estimate of the distance to Vega now depends only on the Russell measurement. Because the interval of the Russell measurement is entirely contained in the interval of the Struve measurement, the Struve measurement provides no further information. But the cell remembers the Struve measurement and its provenance so it can be recovered, if needed.
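The merging rule at work here is interval intersection. A minimal Python sketch, assuming intervals are plain `(low, high)` pairs and using `None` as a stand-in for a contradiction; `merge_intervals` is a hypothetical name, and the parallax values are kept in milliarcseconds for readability.

```python
def merge_intervals(a, b):
    """Intersect two closed intervals (low, high); None signals a contradiction."""
    low, high = max(a[0], b[0]), min(a[1], b[1])
    return (low, high) if low <= high else None


# Parallax measurements in milliarcseconds, taken from the text.
struve_1837 = (125 - 50, 125 + 50)            # (75, 175)
russell_1982 = (124.3 - 4.9, 124.3 + 4.9)     # about (119.4, 129.2)

merged = merge_intervals(struve_1837, russell_1982)
disjoint = merge_intervals((0, 1), (2, 3))    # no overlap at all
```

Because the Russell interval lies entirely inside the Struve interval, the merged value is exactly the Russell interval, which is why only that premise appears in the support.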

By 1995 there were some better measurements: 4

(tell! Vega-parallax

(+->interval (mas->radians 131) (mas->radians 0.77))

'Gatewood-deJonge1995)

((vega-parallax)

(has-value (the-contradiction))

(depends-on jrussell-etal1982 gatewood-dejonge1995)

(because

((has-value (interval 5.7887e-7 6.2638e-7))

(depends-on jrussell-etal1982))

((has-value (interval 6.3137e-7 6.3884e-7))

(depends-on gatewood-dejonge1995))))

We see that the contradiction depends on the two sources of information. Each source provides an interval, and the intervals do not overlap. Suppose we think that the measurement by Gatewood and de Jonge looks suspicious. Let's retract that premise:

(retract! 'Gatewood-deJonge1995)

All values that depend on the retracted premise are now retracted, and thus the value that we see for the distance has reverted to

(inquire Vega-parallax-distance)

((vega-parallax-distance)

(has-value (interval 7.7399 8.3752))

(depends-on jrussell-etal1982))

This is what we got from Russell et al.; and indeed that premise supports the value.

But the plot thickens, because the Hipparcos satellite (as reported by Van Leeuwen [83]) made some very impressive measurements of the parallax of Vega:

(tell! Vega-parallax

(+->interval (mas->radians 130.23) (mas->radians 0.36))

'FvanLeeuwen2007Nov)

((vega-parallax)

(has-value (the-contradiction))

(depends-on jrussell-etal1982 fvanleeuwen2007nov)

(because

((has-value (interval 5.7887e-7 6.2638e-7))

(depends-on jrussell-etal1982))

((has-value (interval 6.2963e-7 6.3312e-7))

(depends-on fvanleeuwen2007nov))))

Which do we believe? 5 Let's reject the Russell result:

(retract! 'JRussell-etal1982)

(inquire Vega-parallax-distance)

((vega-parallax-distance)

(has-value (interval 7.6576 7.7))

(depends-on fvanleeuwen2007nov))

Here we have the satellite's result isolated. Now let's add back Gatewood and see what happens:

(assert! 'Gatewood-deJonge1995)

(inquire Vega-parallax-distance)

((vega-parallax-distance)

(has-value (interval 7.6576 7.6787))

(depends-on gatewood-dejonge1995 fvanleeuwen2007nov))

We get a stronger result because the intersection of the intervals of Van Leeuwen and Gatewood is smaller than either separately. 6 (The Gatewood result, (interval 7.589 7.6787), is not shown.)
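The whole sequence of telling, retracting, and reasserting can be imitated with a toy cell that remembers every measurement together with its premise. This Python sketch is an illustration under strong simplifying assumptions, not the book's mechanism: `ParallaxCell` and its methods are hypothetical, intervals are in milliarcseconds, the support is crudely taken to be the premises that supply the tightest lower and upper bounds, and distance uses the small-angle form d ≈ 1000 / θ (θ in milliarcseconds).

```python
class ParallaxCell:
    """Toy cell that keeps every (interval, premise) pair it was told."""

    def __init__(self):
        self.told = {}          # premise name -> (low, high) in milliarcseconds
        self.believed = set()   # premises currently believed

    def tell(self, center, delta, premise):
        self.told[premise] = (center - delta, center + delta)
        self.believed.add(premise)

    def retract(self, premise):
        self.believed.discard(premise)   # the measurement itself is kept

    def reassert(self, premise):
        self.believed.add(premise)

    def strongest(self):
        """Return (interval, support); interval is None on contradiction."""
        active = {p: self.told[p] for p in self.believed}
        if not active:
            return (float("-inf"), float("inf")), set()
        low_p = max(active, key=lambda p: active[p][0])    # tightest lower bound
        high_p = min(active, key=lambda p: active[p][1])   # tightest upper bound
        low, high = active[low_p][0], active[high_p][1]
        interval = (low, high) if low <= high else None
        return interval, {low_p, high_p}


vega = ParallaxCell()
vega.tell(125, 50, "FGWvonStruve1837")
vega.tell(124.3, 4.9, "JRussell-etal1982")
vega.tell(131, 0.77, "Gatewood-deJonge1995")
first_clash = vega.strongest()

vega.retract("Gatewood-deJonge1995")
vega.tell(130.23, 0.36, "FvanLeeuwen2007Nov")
second_clash = vega.strongest()

vega.retract("JRussell-etal1982")
vega.reassert("Gatewood-deJonge1995")
(low, high), support = vega.strongest()
distance = (1000 / high, 1000 / low)      # parsecs, small-angle approximation
```

Retraction never discards a measurement; it only changes which premises are believed, so reasserting the 1995 premise immediately restores its contribution.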

Magnitudes

There are other ways to estimate the distance to a star. We know that the apparent brightness of a star decreases with the square of the distance from us, so if we knew the intrinsic brightness of the star we could get the distance by measuring its apparent brightness.

By now we have a pretty good theoretical understanding that can give reliable and accurate estimates of the intrinsic brightness of some kinds of stars. For those stars, spectroscopic analysis of the light we receive from the star gives us information about, for example, its state, its chemical composition, and its mass; and from these we can estimate the intrinsic brightness. Vega is a very good example of a star we know a lot about.

Astronomers describe the brightness of a star in magnitudes. A difference of 5 magnitudes is defined to be a factor of 100 in brightness. 7 The intrinsic brightness of a star is given as the magnitude it would appear to have if it were situated 10 parsecs away from the observer. This is called the absolute magnitude of the star. We can summarize the connection between brightness and distance in a neat formula that combines the inverse square law with the definition of magnitudes. If M is the absolute magnitude of a star, m is its apparent magnitude, and d is the distance to the star in parsecs, then m − M = 5(log10(d) − 1). This formula can be represented by a constraint-propagator constructor: 8

(define-c:prop

(c:magnitudes<->distance apparent-magnitude

absolute-magnitude

magnitude-distance)

(let-cells (dmod dmod/5 ld10 ld

(ln10 (log 10)) (one 1) (five 5))

(c:+ absolute-magnitude dmod apparent-magnitude)

(c:* five dmod/5 dmod)

(c:+ one dmod/5 ld10)

(c:* ln10 ld10 ld)

(c:exp ld magnitude-distance)))
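Reading the five constraints in order shows how the network encodes the formula. Writing m, M, and d for the apparent magnitude, absolute magnitude, and distance, and noting that `(log 10)` in Scheme is the natural logarithm of 10, the internal cells stand for:

```latex
\begin{aligned}
\mathit{dmod} &= m - M, \\
\mathit{ld10} &= 1 + \frac{\mathit{dmod}}{5} = \log_{10} d, \\
\mathit{ld} &= \ln(10)\cdot\mathit{ld10} = \ln d, \\
d &= e^{\mathit{ld}} = 10^{\,1 + (m - M)/5}.
\end{aligned}
```

The last line is the formula m − M = 5(log10(d) − 1) solved for d. Because every step is a constraint rather than an assignment, the same network also yields m or M when the other two quantities are known.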

Now let's wire up some knowledge of Vega. We define some cells and interconnect them with the propagators:

(define-cell Vega-apparent-magnitude)

(define-cell Vega-absolute-magnitude)

(define-cell Vega-magnitude-distance)

(c:magnitudes<->distance Vega-apparent-magnitude

Vega-absolute-magnitude

Vega-magnitude-distance)

We now provide some measurements. Vega is very bright: its apparent magnitude is very close to zero. (The Hubble space telescope was used to make this very precise measurement. See Bohlin and Gilliland [14].)

(tell! Vega-apparent-magnitude

(+->interval 0.026 0.008)

'Bohlin-Gilliland2004)

And the absolute magnitude of Vega is also known to rather high precision [44]:

(tell! Vega-absolute-magnitude

(+->interval 0.582 0.014)

'Gatewood2008)

As a consequence we get a pretty nice estimate of the distance to Vega, which depends only on these measurements:

(inquire Vega-magnitude-distance)

((vega-magnitude-distance)

(has-value (interval 7.663 7.8199))

(depends-on gatewood2008 bohlin-gilliland2004))
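This interval can be checked directly from the magnitude formula. The Python sketch below (a standalone illustration, with the hypothetical helper `magnitudes_to_distance`) evaluates d = 10^(1 + (m − M)/5) at the appropriate ends of the two measured intervals.

```python
def magnitudes_to_distance(apparent, absolute):
    """Distance interval (parsecs) from magnitude intervals given as (low, high).

    d = 10 ** (1 + (m - M) / 5) increases with m and decreases with M,
    so the low end pairs the smallest m with the largest M.
    """
    m_low, m_high = apparent
    M_low, M_high = absolute
    return (10 ** (1 + (m_low - M_high) / 5),
            10 ** (1 + (m_high - M_low) / 5))


apparent = (0.026 - 0.008, 0.026 + 0.008)    # Bohlin and Gilliland
absolute = (0.582 - 0.014, 0.582 + 0.014)    # Gatewood 2008
d_low, d_high = magnitudes_to_distance(apparent, absolute)
```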

Unfortunately, we have the distance in two different cells, so let's connect them with a propagator:

(c:same Vega-magnitude-distance Vega-parallax-distance)

At this point we have an even better value for the distance to Vega—an interval whose high end is the same as before (on page 336), but whose low end is a bit higher:

(inquire Vega-parallax-distance)

((vega-parallax-distance)

(has-value (interval 7.663 7.6787))

(depends-on fvanleeuwen2007nov gatewood-dejonge1995

gatewood2008 bohlin-gilliland2004))

Does the 1995 measurement of Gatewood and de Jonge really matter here? Let's find out:

(retract! 'Gatewood-deJonge1995)

(inquire Vega-parallax-distance)

((vega-parallax-distance)

(has-value (interval 7.663 7.7))

(depends-on fvanleeuwen2007nov

gatewood2008

bohlin-gilliland2004))

Indeed it does. The 1995 measurement pulled in the high end of the interval.

Measurements Improved!

We have two ways of computing the distance to Vega—from parallax and from magnitude. Here is something remarkable: the parallax and magnitude measurement intervals are each improved using the information coming from the other. This is required in order for the system to be consistent.

Look at the apparent magnitude of Vega. The original measurement supplied from Bohlin and Gilliland was m = 0.026 ± 0.008. This translates to the interval

(+->interval 0.026 0.008)

(interval .018 .034)

But now the value is a bit better, [0.018, 0.028456]:

(inquire Vega-apparent-magnitude)

((vega-apparent-magnitude)

(has-value (interval 1.8e-2 2.8456e-2))

(depends-on gatewood2008

fvanleeuwen2007nov

bohlin-gilliland2004))

The high end had to be pulled in to be consistent with the information from the parallax measurements. This is true for each measurable quantity. The absolute magnitude supplied by Gatewood 2008 (page 338) was:

(+->interval 0.582 0.014)

(interval .568 .596)

But now the low end is pulled in:

(inquire Vega-absolute-magnitude)

((vega-absolute-magnitude)

(has-value (interval 5.8554e-1 5.96e-1))

(depends-on gatewood2008

fvanleeuwen2007nov

bohlin-gilliland2004))

The parallax is also improved by information from the magnitude measurements:

(inquire Vega-parallax)

((vega-parallax)

(has-value (interval 6.2963e-7 6.3267e-7))

(depends-on fvanleeuwen2007nov

gatewood2008

bohlin-gilliland2004))

The fact that the computation propagates in all directions gives us a powerful tool for understanding the implications of any new information.
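The improved intervals shown above can be reproduced by one round of propagation done by hand. This Python sketch is a check of the arithmetic, not the propagator system itself: it assumes the Hipparcos parallax and the two magnitude measurements are the believed premises, keeps the parallax in milliarcseconds, and uses the small-angle form d ≈ 1000 / θ. All names are hypothetical.

```python
import math


def intersect(a, b):
    """Intersection of two closed intervals (low, high); assumes they overlap."""
    return (max(a[0], b[0]), min(a[1], b[1]))


# Measurements from the text.
parallax_mas = (130.23 - 0.36, 130.23 + 0.36)
m_told = (0.026 - 0.008, 0.026 + 0.008)
M_told = (0.582 - 0.014, 0.582 + 0.014)

# Forward: each route gives a distance interval; the shared cell keeps the overlap.
d_parallax = (1000 / parallax_mas[1], 1000 / parallax_mas[0])
d_magnitude = (10 ** (1 + (m_told[0] - M_told[1]) / 5),
               10 ** (1 + (m_told[1] - M_told[0]) / 5))
d = intersect(d_parallax, d_magnitude)

# Backward: push the tightened distance through m - M = 5 (log10 d - 1).
dmod = (5 * (math.log10(d[0]) - 1), 5 * (math.log10(d[1]) - 1))
m_new = intersect(m_told, (M_told[0] + dmod[0], M_told[1] + dmod[1]))
M_new = intersect(M_told, (m_told[0] - dmod[1], m_told[1] - dmod[0]))
parallax_new = intersect(parallax_mas, (1000 / d[1], 1000 / d[0]))
```

The high end of the distance comes from the parallax and the low end from the magnitudes, so each route tightens the other: the apparent magnitude loses its high end, the absolute magnitude its low end, and the parallax its high end.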

Exercise 7.1: Making writing propagator networks easier

In our propagator system it is pretty painful to write the code to build even a simple network, because all internal nodes must be named. For example, a constraint propagator that converts between Celsius and Fahrenheit temperatures looks like:

(define-c:prop (celsius fahrenheit)

(let-cells (u v (nine 9) (five 5) (thirty-two 32))

(c:* celsius nine u)

(c:* v five u)

(c:+ v thirty-two fahrenheit)))

It would be much nicer to be able to use expression syntax for some propagators, so we could write:

(define-c:prop (celsius fahrenheit)

(c:+ (ce:* (ce:/ (constant 9) (constant 5))

celsius)

(constant thirty-two)

fahrenheit))

Here ce:* and ce:+ are propagator constructors that create the cell for the value and return it to their caller. The procedure ce:+ could be written:

(define (ce:+ x y)

(let-cells (sum)

(c:+ x y sum)

sum))

Besides constraint propagators, there are also directional propagators such as p:+. A nice name for the expression form of this is pe:+.

We have access to the names of all of the primitive arithmetic operators. Write a program that takes these names and installs both directional- and constraint-expression forms for each operator.

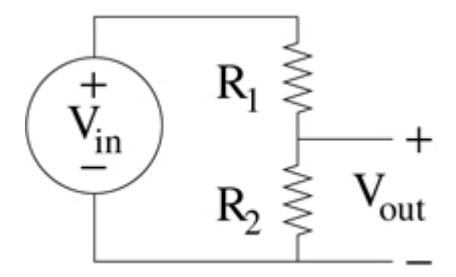

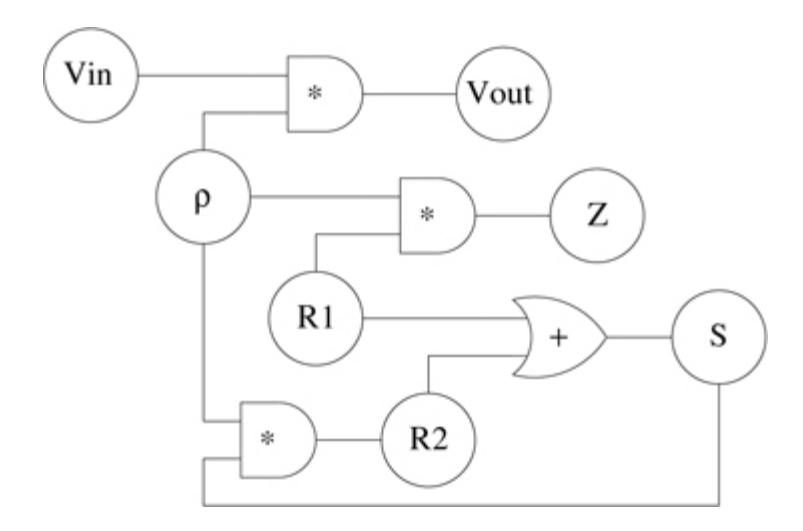

Exercise 7.2: An electrical design problem

Note: You don't need to know electronics to do this problem. Anna Logue is designing a transistor amplifier. As part of her plan she needs to make a voltage divider to bias a transistor. The voltage divider is made of two resistors, with resistance values R1 and R2. ρ is the ratio of output voltage Vout to power-supply voltage Vin. There is also Z, the output resistance of the divider.

Here are the relevant equations:

$$\rho = \frac{V_{out}}{V_{in}}$$

$$\rho = \frac{R_2}{R_1 + R_2}$$

$$Z = R_1 \rho$$

Since Anna has many problems like this to solve, she makes a constraint network to help her:

- a. Make a propagator network that implements this diagram.

- b. Anna has a power supply with a voltage between 14.5 and 15.5 volts, and she needs the output of the voltage divider to be between 3.5 and 4.0 volts: Vin ∈ [14.5, 15.5] and Vout ∈ [3.5, 4.0].

She has in stock a 47000-ohm resistor for R2. What is the range of values from which she can select R1? Can she choose a value for R1 that satisfies her specification?

- c. Anna also needs the output resistance of the divider to be between 20000 and 30000 ohms: Z ∈ [20000, 30000].

So her real problem is to find appropriate ranges of values for the voltage-divider resistors R1 and R2 given the division ratio ρ required and the specification of Z.

If instead of choosing R2 (remember to retract the support for this value!) she chooses to assert the Z specification, this should determine R1 and R2; but the network will not find the value of R2! Why? Explain this problem.

- d. If we now tell R2 that it is somewhere in the range of 1000 ohms to 500000 ohms, the propagator network will converge to give a useful answer for the real range of R2. Why? Explain this!

Exercise 7.3: Local consistency—a project

Propagation is a way of attacking local consistency problems. For example, the Waltz algorithm [125] is a propagation method for interpreting line drawings of solid polyhedra. Map coloring and similar problems can be successfully attacked using propagation.

The essential idea is that there is a graph with nodes that can be assigned one of a set of discrete labels, and that the nodes are interconnected by constraints that limit which labels are allowed based on the labels in neighboring nodes. For example, in the Waltz algorithm a line may have one of several labels. Each line connects two vertices. A vertex constrains the lines that terminate on it to be consistent with one of a set of possible geometric interpretations of the vertex. But the interpretation of a line must be the same at both ends of the line.

- a. For these experiments you will need an "arithmetic" of discrete sets. You will need unions, intersections, and the complement of one set in another. Build such an arithmetic.

- b. The set of possibilities for a node is partial information about the actual status of the node: the smaller the set of possibilities the more information we have about the node. If we represent the knowledge about the status of a node as a propagator cell, the merger of two sets is their intersection. This is consistent with the intersection of intervals for ranges of real values. Make intersection of discrete sets a handler for generic merge.

- c. Build and demonstrate your solution to a local consistency problem using this organization.

- d. Notice that in many graphs the assignment of a node depends only on a few of the constraints. Show how to use support tracking to give explanations for the assignment of a node.

7.2 The propagation mechanism

The essential propagation machinery consists of cells, propagators, and a scheduler. A cell accumulates information about a value. It must be able to say what information it has, and it must be able to accept updates to that information. It also must be able to alert propagators that are interested in its contents about changes to its contents. Each cell maintains a set of propagators that may be interested in its contents; these are called neighbors.

A propagator is a stateless (functional) procedure that is activated by changes in the value of any cell it is interested in. Cells that may activate a propagator are its input cells. An activated propagator gathers information from its input cells and may compute an update for one or more output cells. A cell may be both an input and an output for a propagator.

The content of a cell is the information it has accumulated about its value. When asked for its value, for example by a propagator, it responds with the strongest value it can provide. We have seen this in the use of intervals: a cell reports the tightest possible interval it knows about for its value. When a cell receives input it determines if the change in its contents makes a change in its strongest value. If the strongest value changes, the cell alerts its neighbors. This tells the scheduler to activate them. The scheduler is responsible for allocating computational resources to the activated propagators. It is intended that the computational result of propagation is independent of the details or order of scheduling.

Cells and propagators are elements organized in a hierarchy. Each cell or propagator has a name, a parent, and perhaps a set of children. These are used to construct unique path names for each cell or propagator in the hierarchy. The path name can be used to access the element and to identify it in printed output. A cell or propagator is made either by the user or by a compound propagator. The parameter *my-parent* is dynamically bound by the parent. This allows the new cell or propagator to attach itself to the family.

7.2.1 Cells

A cell is implemented as a message-accepting procedure, using the bundle macro. The cell maintains its information in the content variable, which is initialized to a value the-nothing (identified by the predicate nothing?) that represents the absence of any information about the value. The value that the cell reports, when asked, is the strongest value that it has at the moment. The cell also maintains a list of its neighbors, the propagators that need to be alerted when the strongest value of the cell changes. An auxiliary data structure relations is used to hold the cell's family relations.

Here is an outline of the constructor for cells. The interesting parts are add-content! and test-content!, explained below.

(define (make-cell name)

(let ((relations (make-relations name (*my-parent*)))

(neighbors '())

(content the-nothing)

(strongest the-nothing))

(define (get-relations) relations)

(define (get-neighbors) neighbors)

(define (get-content) content)

(define (get-strongest) strongest)

(define (add-neighbor! neighbor)

(set! neighbors (lset-adjoin eq? neighbors neighbor)))

(define (add-content! increment)

(set! content (cell-merge content increment))

(test-content!))

(define (test-content!)

See definition on page 345.)

(define me

(bundle cell? get-relations get-neighbors

get-content get-strongest add-neighbor!

add-content! test-content!))

(add-child! me (*my-parent*))

(set! *all-cells* (cons me *all-cells*))

me))

A cell receives new information through a call to add-content!. The new information, increment, must be merged with the existing information in content. In general, the merging process is specific to the kind of information being merged, so the merging mechanism for the cell must be specified. However, the-nothing, which represents the absence of information, is special. Any information merged with the-nothing is returned unchanged.

The reason for merging rather than replacement is to use partial information to refine our knowledge of the value. 9 For example, in the computation of stellar distances described above, intervals are merged to produce better estimates by intersection. In the type-inference example (see section 4.4.2) we combined descriptions by unification to get more specific information. We will examine the general problem of merging values in section 7.4.

In some cases it may not be possible to merge two pieces of information. For example, the value of an unknown number cannot be both zero and one. In this case cell-merge returns a contradiction object, which may carry information about the details of the conflict. If there is no extra information to be had, the contradiction object is the symbol the-contradiction, which satisfies the primitive predicate contradiction?. More complex contradictions are detected by the generic predicate procedure general-contradiction?. Contradictions are resolved, if possible, by handle-cell-contradiction, as explained in section 7.5.

If the cell's strongest value changes, the neighbors are alerted. But if an increment does not affect the strongest value, it provides no additional information; in that case it is important to avoid alerting the neighbors, to prevent useless loops. All this is implemented by the test-content! procedure, which is defined as an internal procedure in make-cell.

(define (test-content!)

(let ((strongest* (strongest-value content)))

(cond ((equivalent? strongest strongest*)

(set! strongest strongest*)

'content-unchanged)

((general-contradiction? strongest*)

(set! strongest strongest*)

(handle-cell-contradiction me)

'contradiction)

(else

(set! strongest strongest*)

(alert-propagators! neighbors)

'content-changed))))

The procedure test-content! is also used to alert all cells when a premise changes its belief status. Each alerted cell checks if its strongest value has changed, requiring some action, like signaling a contradiction or alerting its propagator neighbors. See section 7.3.
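The interplay of merging and alerting can be illustrated with a stripped-down cell in Python (chosen so the example runs without the book's Scheme library). This is a sketch under stated assumptions: content is a plain interval `(low, high)`, `None` stands in for the-nothing, the string `"contradiction"` stands in for the contradiction object, the content is its own strongest value because there are no layers, and a contradiction is only reported rather than handled. The class and function names are hypothetical.

```python
NOTHING = None   # stands in for the-nothing


def merge(content, increment):
    """Interval intersection; NOTHING is the identity element."""
    if content is NOTHING:
        return increment
    if increment is NOTHING:
        return content
    low, high = max(content[0], increment[0]), min(content[1], increment[1])
    return (low, high) if low <= high else "contradiction"


class Cell:
    def __init__(self, name):
        self.name = name
        self.content = NOTHING
        self.strongest = NOTHING
        self.neighbors = []    # zero-argument callables to alert on change

    def add_neighbor(self, propagator):
        if propagator not in self.neighbors:
            self.neighbors.append(propagator)

    def add_content(self, increment):
        self.content = merge(self.content, increment)
        return self.test_content()

    def test_content(self):
        strongest = self.content
        if strongest == self.strongest:
            return "content-unchanged"      # no new information: stay quiet
        self.strongest = strongest
        if strongest == "contradiction":
            return "contradiction"          # reported only, not resolved
        for alert in self.neighbors:
            alert()
        return "content-changed"


alerts = []
cell = Cell("distance")
cell.add_neighbor(lambda: alerts.append(cell.strongest))
results = [cell.add_content((5.7, 13.3)),
           cell.add_content((7.7, 8.4)),
           cell.add_content((5.0, 9.0)),     # adds nothing new
           cell.add_content((9.0, 10.0))]    # disjoint from (7.7, 8.4)
```

Only the first two increments alert the neighbor. The third is absorbed silently, which is what prevents useless loops in a network with cycles.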

To hide the implementation details of a cell we provide convenient access procedures:

(define (add-cell-neighbor! cell neighbor)
  (cell 'add-neighbor! neighbor))

(define (add-cell-content! cell increment)
  (parameterize ((current-reason-source cell))
    (cell 'add-content! increment)))

(define (cell-strongest cell)
  (cell 'get-strongest))

The current-reason-source parameter in add-cell-content! is part of the layer that gives a reason for every value, as described in footnote 3 on page 333. This useful feature will not be further elaborated here.

7.2.2 Propagators

To make a propagator we supply a list of input cells, a list of output cells, and a procedure activate! to execute when alerted. The constructor introduces the propagator to its input cells with add-cell-neighbor!. It also alerts the new propagator so that it will be run if needed.

(define (propagator inputs outputs activate! name)
  (let ((relations (make-relations name (*my-parent*))))
    (define (get-inputs) inputs)
    (define (get-outputs) outputs)
    (define (get-relations) relations)
    (define me
      (bundle propagator? activate!
              get-inputs get-outputs get-relations))
    (add-child! me (*my-parent*))
    (for-each (lambda (cell)
                (add-cell-neighbor! cell me))
              inputs)
    (alert-propagator! me)
    me))

Primitive propagators are directional in that their outputs do not overlap with their inputs. We make primitive propagators from Scheme procedures that produce a single output. By convention, we build a primitive propagator by passing the input cells and the output cell together, with the output last. We could make a primitive propagator that produced several outputs, such as integer divide with remainder, but we do not need this here.

(define (primitive-propagator f name)
  (lambda cells
    (let ((output (car (last-pair cells)))
          (inputs (except-last-pair cells)))
      (propagator inputs (list output)
        (lambda ()
          (let ((input-values (map cell-strongest inputs)))
            (if (any unusable-value? input-values)
                'do-nothing
                (add-cell-content! output
                  (apply f input-values)))))
        name))))

When activated, a propagator may choose to compute a result using f. The result of calling f on the input values is added to the output cell. We call this choice process the activation policy. Here we require all inputs to be usable values. By default, contradiction objects and the-nothing are unusable, though we may add others later. Other policies are possible.

Propagators may be constructed by combining other propagators. We make compound propagators by supplying a procedure to-build that builds the desired network from parts. A compound propagator is not built until it is needed to make a computation. But that need arises only when data arrives at one or more of its input cells to activate it. However, we do not want to rebuild the compound propagator network every time it gets new values in its input cells, so the constructor must make sure that it is built only once. This is arranged with a boolean flag built? that is set when the build is done.

(define (compound-propagator inputs outputs to-build name)
  (let ((built? #f))
    (define (maybe-build)
      (if (or built?
              (and (not (null? inputs))
                   (every unusable-value?
                          (map cell-strongest inputs))))
          'do-nothing
          (begin (parameterize ((*my-parent* me))
                   (to-build))
                 (set! built? #t)
                 'built)))
    (define me
      (propagator inputs outputs maybe-build name))
    me))

The activation policy for a compound propagator is different from the activation policy for a primitive propagator. Here we build the network if any input has a usable value. This is appropriate because some part of the network may do some useful computation even if not all of the inputs are available.

The parameterize machinery is in support of the hierarchical organization of the propagator elements. It makes the compound propagator the parent of any cells or propagators that are constructed in the building of the network.

As described in figure 7.4 on page 332, constraint propagators are constructed by combining directional propagators. For example, we can make the propagator that enforces the constraint that the product of the values in two cells is the value in the third as follows:

(define-c:prop (c:* x y product)
  (p:* x y product)
  (p:/ product x y)
  (p:/ product y x))

Here we see that three directional propagators are combined to make the constraint. This can work because we merge values rather than replacing them, and equivalent values do not propagate. If equivalent values propagated, anything like the c:* propagator would be an infinite loop. 10

The macro define-c:prop is just syntactic sugar. The actual code produced by the macro is:

(define (c:* x y product)
  (constraint-propagator
   (list x y product)
   (lambda ()
     (p:* x y product)
     (p:/ product x y)
     (p:/ product y x))
   'c:*))

where constraint-propagator is just:

(define (constraint-propagator cells to-build name)
  (compound-propagator cells cells to-build name))

All the cells associated with a constraint propagator are both input and output cells.
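To see why merging lets three directional propagators cooperate without looping, here is a minimal, self-contained toy. It is a sketch under simplifying assumptions, not the system described in this chapter: cells hold only exact numbers or the-nothing, merging is plain numeric equality, there is no scheduler (a cell whose content changes runs its neighbors immediately), and the constraint is wired eagerly rather than through compound-propagator. The names make-cell, primitive-propagator, p:*, p:/, and c:* are reused for these toy versions, and the 'content message is invented for the illustration.

```scheme
;; Toy propagator system (illustrative assumptions throughout).
(define the-nothing 'the-nothing)
(define (nothing? x) (eq? x the-nothing))

;; A cell is a message-accepting closure holding one number or the-nothing.
(define (make-cell name)
  (let ((content the-nothing)
        (neighbors '()))
    (lambda (message . args)
      (cond ((eq? message 'content) content)
            ((eq? message 'add-neighbor!)
             (set! neighbors (cons (car args) neighbors)))
            ((eq? message 'add-content!)
             (let ((increment (car args)))
               (cond ((nothing? increment) 'no-information)
                     ((nothing? content)
                      (set! content increment)
                      ;; The content changed, so alert the neighbors.
                      (for-each (lambda (activate!) (activate!)) neighbors)
                      'content-changed)
                     ;; An equivalent value does not propagate: this is
                     ;; what stops the three propagators from looping.
                     ((= content increment) 'content-unchanged)
                     (else
                      (error "Contradiction in cell:" name content increment)))))
            (else (error "Unknown message:" message))))))

;; Output cell is passed last, as in the text.
(define (primitive-propagator f)
  (lambda cells
    (let* ((reversed (reverse cells))
           (output (car reversed))
           (inputs (reverse (cdr reversed))))
      (define (activate!)
        (let ((input-values (map (lambda (cell) (cell 'content)) inputs)))
          (if (memq the-nothing input-values)
              'do-nothing
              (output 'add-content! (apply f input-values)))))
      (for-each (lambda (cell) (cell 'add-neighbor! activate!)) inputs)
      (activate!))))

(define p:* (primitive-propagator *))
(define p:/ (primitive-propagator /))

;; The constraint is three directional propagators sharing three cells.
(define (c:* x y product)
  (p:* x y product)
  (p:/ product x y)
  (p:/ product y x))

(define x (make-cell 'x))
(define y (make-cell 'y))
(define product (make-cell 'product))
(c:* x y product)
(product 'add-content! 12)
(x 'add-content! 3)
(y 'content)                            ; 4, deduced "backward" from product and x
```

Once y receives 4, the other two propagators recompute 3 for x and 12 for product. Both are equivalent to what the cells already hold, so nothing further is alerted and the network goes quiet.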

7.3 Multiple alternative world views

In our stellar distances example we showed that each value carried the support set of premises used in its computation, and also the "reason" for the value (the propagator that made it and the values that it was made from). This was done using the layered-data mechanism we introduced in section 6.4. But some "facts" are mutually inconsistent. In our example we modulated the belief in the premises to obtain locally consistent world views, depending on which premises we chose to believe.

A premise is either in (believed) or out (not believed). The user in our example could assert! a premise to bring it in or retract! it to kick it out. The "magic" in the system is that the observable values in cells are always those that are fully supported—those for which the supporting premises are all in—even as the beliefs in the premises are changed. 11

It is silly to recompute all of the values as the belief status of the support changes. We can do better by remembering values that are not currently fully supported. This allows us to reassert a premise, and recover the values that it supports without recomputing those values. When the state of belief in a premise changes, cells must check if their strongest value has changed. This is accomplished by calling the test-content! for every cell; each cell whose strongest value changes alerts the propagators that depend on that cell's value. Each of those propagators then gets the strongest values of the contents of its input cells and computes (or recomputes!) its output value. If that output value is equivalent to the strongest value already stored in the output cell, there will be no further action. If the belief status of the strongest value in the output cell changes, this will cause its neighboring propagators to recompute. But the strongest value in the output cell may have independent support, in which case the propagation will stop there.

To make this work, in each cell the content may hold a set of values (the value set) paired with the premises they depend upon.

The cell extracts the strongest-value from the content and keeps it in the local variable strongest, which can be accessed using cell-strongest. The strongest value is the best choice of the fully supported values in the set, 12 or the-nothing if none of the values in the set are fully supported.

It remains to elucidate strongest-value, which must be able to operate on raw data, on layered data, and on value sets. Thus it is appropriate to make it a generic procedure. The strongest value of an unannotated data item is just that data item, so this provides the default.

(define strongest-value
  (simple-generic-procedure 'strongest-value 1
    (lambda (object) object)))

If a layered data item is fully supported, then its strongest value is itself, otherwise its strongest value is no information.

(define-generic-procedure-handler strongest-value
  (match-args layered-datum?)
  (lambda (elt)
    (if (all-premises-in? (support-layer-value elt))
        elt
        the-nothing)))

The strongest value of a value set is the strongest consequence of the set:

(define-generic-procedure-handler strongest-value
  (match-args value-set?)
  (lambda (set) (strongest-consequence set)))

The procedure strongest-consequence just merges together the elements of a value set that are fully supported. It uses merge-layered to determine the "best choice" of the fully supported values in the value set (see section 7.4.2). If there are no fully supported values there is no information, so the result is the-nothing.

(define (strongest-consequence set)
  (fold (lambda (increment content)
          (merge-layered content increment))
        the-nothing
        (filter (lambda (elt)
                  (all-premises-in?
                   (support-layer-value elt)))
                (value-set-elements set))))
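The effect of changing beliefs can be seen in a small stand-alone sketch. It makes several assumptions that are not part of the book's system: an element is a two-element list of an interval (a low . high pair) and a list of premise symbols, the believed premises live in a global list named believed, and merge-elements always intersects the intervals and appends the supports, which is cruder than merge-layered.

```scheme
;; Illustrative stand-ins; none of these are the book's definitions.
(define the-nothing 'the-nothing)
(define believed '(a b))                 ; premises that are currently "in"

(define (all-premises-in? support)
  (cond ((null? support) #t)
        ((memq (car support) believed) (all-premises-in? (cdr support)))
        (else #f)))

;; Intersect the intervals and concatenate the supports.
(define (merge-elements content increment)
  (if (eq? content the-nothing)
      increment
      (list (cons (max (car (car content)) (car (car increment)))
                  (min (cdr (car content)) (cdr (car increment))))
            (append (cadr content) (cadr increment)))))

;; Fold the fully supported elements together, starting from the-nothing.
(define (strongest-consequence-sketch elements)
  (let loop ((rest elements) (content the-nothing))
    (cond ((null? rest) content)
          ((all-premises-in? (cadr (car rest)))
           (loop (cdr rest) (merge-elements content (car rest))))
          (else (loop (cdr rest) content)))))

(define value-set
  (list (list (cons 1 10) '(a))
        (list (cons 4 12) '(b))))

(strongest-consequence-sketch value-set) ; ((4 . 10) (a b))
(set! believed '(a))                     ; retract premise b
(strongest-consequence-sketch value-set) ; ((1 . 10) (a))
(set! believed '())                      ; retract premise a as well
(strongest-consequence-sketch value-set) ; the-nothing
```

The value set itself never changes. Only the filter over believed premises does, which is why a retracted premise can be reasserted without recomputing the values it supports.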

7.4 Merging values

We have not addressed what it means to merge values. This is a complicated process, with three parts: merging base values, such as numbers and intervals; merging supported values; and merging value sets. The procedure cell-merge in add-content! must be assigned to an appropriate merger for the data being propagated. On page 366, setup-propagator-system initializes cell-merge to merge-value-sets.

7.4.1 Merging base values

There are only a few base value types in our example propagator system: the-nothing, the-contradiction, numbers, booleans, and intervals. Numbers and booleans are simple in that only equivalent values can be merged. If they cannot be merged it is a contradiction. Anything merged with the-nothing is itself. Anything merged with the-contradiction is the-contradiction. The merge procedure is generic for base values, and the default handler deals with all the simple cases—all except intervals.

(define merge
  (simple-generic-procedure 'merge 2
    (lambda (content increment)
      (cond ((nothing? content) increment)
            ((nothing? increment) content)
            ((contradiction? content) content)
            ((contradiction? increment) increment)
            ((equivalent? content increment) content)
            (else the-contradiction)))))

In the astronomy example we also have interval arithmetic, so we need to be able to merge intervals:

(define (merge-intervals content increment)
  (let ((new-range (intersect-intervals content increment)))
    (cond ((interval=? new-range content) content)
          ((interval=? new-range increment) increment)
          ((empty-interval? new-range) the-contradiction)
          (else new-range))))

We can merge a number with an interval. We get the number if it is contained in the interval, otherwise it is a contradiction:

(define (merge-interval-real int x)
  (if (within-interval? x int)
      x
      the-contradiction))

This all gets glued together as a generic procedure handler:

(define-generic-procedure-handler merge
  (any-arg 2 interval? real?)
  (lambda (x y)
    (cond ((not (interval? x)) (merge-interval-real y x))
          ((not (interval? y)) (merge-interval-real x y))
          (else (merge-intervals x y)))))

There are no other cases of base value merges.
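The base-value rules can be tried out in isolation with the following sketch. It folds the default handler and the interval handler into one ordinary procedure, merge-sketch, so it runs without the generic-procedure machinery. The interval representation (a low . high pair, empty when low exceeds high) and the use of equal? in place of equivalent? are assumptions made only for this illustration.

```scheme
;; Stand-alone approximation of base-value merging (names are hypothetical).
(define the-nothing 'the-nothing)
(define the-contradiction 'the-contradiction)
(define (nothing? x) (eq? x the-nothing))
(define (contradiction? x) (eq? x the-contradiction))

(define (make-interval low high) (cons low high))
(define (interval? x) (and (pair? x) (real? (car x)) (real? (cdr x))))
(define (interval-low i) (car i))
(define (interval-high i) (cdr i))

(define (intersect-intervals a b)
  (make-interval (max (interval-low a) (interval-low b))
                 (min (interval-high a) (interval-high b))))
(define (empty-interval? i) (> (interval-low i) (interval-high i)))
(define (within-interval? x i)
  (and (real? x) (<= (interval-low i) x) (<= x (interval-high i))))

(define (merge-sketch content increment)
  (cond ((nothing? content) increment)
        ((nothing? increment) content)
        ((contradiction? content) content)
        ((contradiction? increment) increment)
        ;; Two intervals: keep their intersection, if there is one.
        ((and (interval? content) (interval? increment))
         (let ((new-range (intersect-intervals content increment)))
           (if (empty-interval? new-range) the-contradiction new-range)))
        ;; An interval and a number: keep the number if it fits.
        ((interval? content)
         (if (within-interval? increment content) increment the-contradiction))
        ((interval? increment)
         (if (within-interval? content increment) content the-contradiction))
        ;; Simple values merge only with equal values.
        ((equal? content increment) content)
        (else the-contradiction)))

(merge-sketch the-nothing 3)                             ; 3
(merge-sketch (make-interval 1 5) (make-interval 3 8))   ; (3 . 5)
(merge-sketch (make-interval 1 5) 4)                     ; 4
(merge-sketch (make-interval 1 2) (make-interval 3 4))   ; the-contradiction
(merge-sketch 0 1)                                       ; the-contradiction
```

Unlike merge-intervals above, the sketch always returns the freshly built intersection rather than handing back content or increment when one of them already equals the result.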

7.4.2 Merging supported values

A supported value is implemented as a layered data item that has a support layer and the base value being propagated. So the merger for supported values must be a layered procedure:

(define merge-layered
  (make-layered-procedure 'merge 2 merge))

The support layer implements merge with support:merge, which is given three arguments: the merged value computed by the base layer, the current content, and the new increment. The job of support:merge is to deliver the support set appropriate for the merged value. If the merged value is the same as the value from the content or the value from the increment, we can use that argument's support. But if the merged value is different, we need to combine the supports.

(define (support:merge merged-value content increment)
  (cond ((equivalent? merged-value
                      (base-layer-value content))
         (support-layer-value content))
        ((equivalent? merged-value
                      (base-layer-value increment))
         (support-layer-value increment))
        (else
         (support-set-union
          (support-layer-value content)
          (support-layer-value increment)))))

(define-layered-procedure-handler merge-layered support-layer
  support:merge)

Here define-layered-procedure-handler is used to attach the procedure support:merge to the layered procedure merge-layered as its support-layer handler.
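The support rule can be illustrated without the layering machinery. In the sketch below, which is an approximation and not the book's implementation, a supported value is a two-element list of a base value and a list of premise symbols, the base values are assumed to be overlapping (low . high) intervals, and merge-base, union-support, and merge-supported are hypothetical helper names.

```scheme
;; A supported value here is (base support); all names are illustrative.
(define (supported base support) (list base support))
(define (base-of v) (car v))
(define (support-of v) (cadr v))

;; Stand-in for the base-layer merge: interval intersection only.
(define (merge-base a b)
  (cons (max (car a) (car b)) (min (cdr a) (cdr b))))

;; Set union on lists of premise symbols.
(define (union-support s1 s2)
  (cond ((null? s1) s2)
        ((memq (car s1) s2) (union-support (cdr s1) s2))
        (else (cons (car s1) (union-support (cdr s1) s2)))))

(define (merge-supported content increment)
  (let ((merged (merge-base (base-of content) (base-of increment))))
    (supported
     merged
     (cond ((equal? merged (base-of content))   ; content already says it all
            (support-of content))
           ((equal? merged (base-of increment)) ; increment alone suffices
            (support-of increment))
           (else                                ; both were needed
            (union-support (support-of content)
                           (support-of increment)))))))

(merge-supported (supported (cons 3 5) '(a))
                 (supported (cons 1 8) '(b)))   ; ((3 . 5) (a))
(merge-supported (supported (cons 1 5) '(a))
                 (supported (cons 3 8) '(b)))   ; ((3 . 5) (a b))
```

In the first call the increment adds nothing, so the result still depends only on premise a. In the second, the narrower interval exists only because both sources contributed, so it depends on both premises.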

7.4.3 Merging value sets

To merge value sets, we just add the elements of the increment to the content to make a new set. Note that ->value-set coerces its argument to a value set.

(define (merge-value-sets content increment)
  (if (nothing? increment)
      (->value-set content)
      (value-set-adjoin (->value-set content) increment)))

When adjoining a new element to the content, we do not add the element if it is subsumed by any existing content element.

(define (value-set-adjoin set elt)
  (if (any (lambda (old-elt)
             (element-subsumes? old-elt elt))
           (value-set-elements set))
      set
      (make-value-set
       (lset-adjoin equivalent?
                    (value-set-elements set)
                    elt))))

The criteria for subsumption are a bit complicated. One element subsumes another if its base value is at least as informative as the other's base value and if its support is a subset of the other's. (Note: A smaller support set is a stronger support set, because it depends on fewer premises.)

(define (element-subsumes? elt1 elt2)
  (and (value-implies? (base-layer-value elt1)
                       (base-layer-value elt2))
       (support-set<= (support-layer-value elt1)
                      (support-layer-value elt2))))

The procedure value-implies? is a generic procedure, because it must be able to work with many kinds of base data, including intervals.
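A concrete case may help with the subsumption test. The sketch below assumes that elements are two-element lists of a (low . high) interval and a list of premise symbols, and that one interval implies another when it lies inside it. The helper names with a -sketch suffix, and support<=?, are hypothetical stand-ins for the procedures above.

```scheme
;; Illustrative stand-ins, specialized to intervals.
(define (value-implies-sketch? a b)       ; a is at least as informative as b
  (and (>= (car a) (car b)) (<= (cdr a) (cdr b))))

(define (support<=? s1 s2)                ; every premise of s1 is in s2
  (cond ((null? s1) #t)
        ((memq (car s1) s2) (support<=? (cdr s1) s2))
        (else #f)))

(define (element-subsumes-sketch? elt1 elt2)
  (and (value-implies-sketch? (car elt1) (car elt2))
       (support<=? (cadr elt1) (cadr elt2))))

(define (any-subsumes? elements elt)
  (cond ((null? elements) #f)
        ((element-subsumes-sketch? (car elements) elt) #t)
        (else (any-subsumes? (cdr elements) elt))))

;; Add elt unless some existing element already subsumes it.
(define (adjoin-sketch elements elt)
  (if (any-subsumes? elements elt)
      elements
      (cons elt elements)))

(define elements (list (list (cons 3 5) '(a))))

;; Wider interval, larger support: subsumed, so nothing is added.
(adjoin-sketch elements (list (cons 1 8) '(a b)))  ; (((3 . 5) (a)))
;; Narrower interval: new information, so it is added.
(adjoin-sketch elements (list (cons 4 5) '(b)))    ; (((4 . 5) (b)) ((3 . 5) (a)))
;; Wider interval but independent support: kept, because it
;; survives if premise a is later retracted.
(adjoin-sketch elements (list (cons 1 8) '(b)))    ; (((1 . 8) (b)) ((3 . 5) (a)))
```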

Exercise 7.4: Merging with unification

We have seen how intervals that partially specify a numerical value can be merged to get more specific information about that value. Another kind of partial information is symbolic patterns, with holes for missing information. This kind of information can be merged using unification, as described in section 4.4. We used unification to implement a simple version of type inference, but it can be used more generally for combining partially specified symbolic expressions. The example of combining records about Ben Franklin in section 4.4 may be suggestive. One way to think about organizing a propagator system is that each cell is a small database restricted to information about some particular thing. The propagators interconnecting cells are ways that deductions can be made. For example, one promising domain is the classification of topological spaces in point-set topology. Another is the organization of your living group—for example, the adjacency relationships of rooms and the social relationships of the inhabitants. Pick a domain that you find interesting. Use your imagination!

- a. Design a propagator network where each cell will hold some particular kind of symbolic information. For example, a cell may represent what is known about a student at MIT. The information may be name, address, telephone number, class year, major, birthday, best friends... This requires designing an extensible data structure that can hold this information and more. You will also need propagators that relate the people. So you may get information from one person, or from multiple people, about another. This may be a nice model of gossip. Make some primitive propagators that manipulate these symbolic quantities and wire up an interesting network.

- b. Add unification as a generic procedure handler for merge, and show how it can be used to combine partial symbolic information coming in from multiple sources.

- c. Discover some interesting compound symbolic propagators that can be used to represent the common combinations of connections of related subjects in your network.

7.5 Searching possible worlds

It would be nice if search were unnecessary. Unfortunately, for many kinds of real problems it is helpful to "assume for the sake of argument" something that may not be true. We then work out the consequences of that assumption. If the assumption leads to a contradiction, we retract it and try something else. But in any case, the assumption may enable other deductions that help solve the problem.

We started to explore this idea in section 5.4, where we introduced amb and used it in search problems. In those adventures with amb we were working in an expression-oriented language with an order of execution that was constrained by the way expressions are evaluated. We partly extracted ourselves from that constraint with the painful use of continuations, either structuring the evaluator to explicitly pass around continuation procedures (in section 5.4.2), or using Scheme's implicit continuations via call/cc (in section 5.5.3). But even with call/cc we do not have sufficient control of the search process.

In section 6.4 we showed how to associate each value with a support set, the set of premises that the value depends on. If each assumption is labeled with a new premise, we can know exactly the combination of assumptions that led to a contradiction. If we are clever, we can avoid asserting that combination of assumptions later in the search. But in the evaluation of expressions it is hard to isolate the assertion of assumptions from the flow of control.

The problem is that in an expression language, the choice decisions are made as expressions are evaluated, producing a branching decision tree. The decision tree is evaluated in some order, for example, depth first or breadth first. The consequences of any sequence of decisions are evaluated after the decisions are made. If a failure is encountered (a contradiction is noted), only the decisions on the evolved branch are possible culprits. But if only some of the decisions on the branch are at fault, there may be some innocent ones that were made later than the last culprit. Computations that depend only on the innocent decisions are lost in backing up to the last culprit. So retracting a branch to an earlier decision may require losing lots of useful deductions.

By contrast, in real problems the consequences of decisions are usually local and limited. For example, when solving a crossword puzzle we often get stuck: we are unable to fill in any blanks that we are sure of. But we can make progress by assuming that some box contains a particular letter, without very good evidence for that assumption. Positing that the box contains that letter allows deductions to follow, but eventually it may be found that the assumption was incorrect and must be retracted. However, many of the deductions made since the assumption are correct, because they did not depend on that assumption. We do not retract those correct deductions just to eliminate the consequences of the wrong assumption. We want the actual consequences of wrong assumptions to be retracted, leaving consequences of other assumptions believed. This is rather hard to arrange in an expression-oriented language system.

With propagators we have escaped the control structure based on evaluation of expressions, at the cost of thinking of the propagators as independent machines running in parallel. Because a propagator cell may contain a value set whose elements are layered values, we can associate a support set with each value. In the propagator system a value is believed only when all of the premises in its support set are believed; and only believed values are propagated. In this way we have the ability to switch world views by modulating the belief status of each premise independently.

Some combinations of premises are contradictory. A contradiction is discovered when the system tries to merge two incompatible fully supported values, thus deriving a contradiction object. The contradiction object has a support set with those premises that imply the contradiction.

To make this work we introduce an amb-like choice propagator, which makes assumptions about the value of a cell that it controls. Each assumption is supported by a hypothetical premise created by the choice propagator that it may assert or retract. The propagator network computes the consequences of alternative assignments of the values of the assumptions made by the choice propagators in the network until a consistent assignment is found.

An example: Pythagorean triples

Consider the problem of finding the Pythagorean triples for natural numbers up to ten. (We considered a similar problem on page 272. Here we are setting up an even dumber algorithm!). We can formulate this as a propagator problem:

(define (pythagorean)
  (let ((possibilities '(1 2 3 4 5 6 7 8 9 10)))
    (let-cells (x y z x2 y2 z2)
      (p:amb x possibilities)
      (p:amb y possibilities)
      (p:amb z possibilities)
      (p:* x x x2)
      (p:* y y y2)
      (p:* z z z2)
      (p:+ x2 y2 z2)
      (list x y z))))

This code constructs a propagator network with three multiplier propagators and an adder propagator that will be satisfied if the values in cells x, y, and z are a Pythagorean triple. Each of these cells is connected to a choice propagator, created by p:amb, that will choose an element from possibilities.

To run this we must first initialize the propagator system:

(initialize-scheduler)

We can now build the propagator network and extract all of the triples from it. The procedure pythagorean constructs the propagator network and returns a list of the three cells of interest. The procedure run turns on the scheduler, thus running the network. While running, the choice propagators propose values of x, y, and z until either an unresolvable contradiction is found or the network becomes quiescent. If no contradiction is found, run returns done, and the base values of the strongest values of each of the interesting cells are printed. Then that combination of values is rejected, and the loop is continued with a new call to run.

(let ((answers (pythagorean)))
  (let try-again ((result (run)))
    (if (eq? result 'done)
        (begin
          (pp (map (lambda (cell)
                     (get-base-value
                      (cell-strongest cell)))
                   answers))
          (force-failure! answers)
          (try-again (run)))
        result)))

(3 4 5)
(4 3 5)
(6 8 10)
(8 6 10)
(contradiction #[cell x])
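As a cross-check on the four triples printed above, the same answers can be enumerated with plain nested loops. This is ordinary brute-force search, not the propagator mechanism, and the procedure names are made up for the illustration.

```scheme
;; Brute-force enumeration, for comparison only.
(define (integers-from-1 n)
  (let loop ((k n) (acc '()))
    (if (= k 0) acc (loop (- k 1) (cons k acc)))))

(define (pythagorean-brute-force limit)
  (let ((numbers (integers-from-1 limit))
        (found '()))
    (for-each
     (lambda (x)
       (for-each
        (lambda (y)
          (for-each
           (lambda (z)
             (if (= (+ (* x x) (* y y)) (* z z))
                 (set! found (cons (list x y z) found))))
           numbers))
        numbers))
     numbers)
    (reverse found)))

(pythagorean-brute-force 10)   ; ((3 4 5) (4 3 5) (6 8 10) (8 6 10))
```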

7.5.1 Dependency-directed backtracking

Dependency-directed backtracking is a powerful technique that optimizes a backtracking search by avoiding asserting a set of premises that support any previously discovered contradiction. 13 The dependency-directed backtracking strategy we use is based on the concept of a nogood set—a set of premises that cannot all be believed at the same time, because their conjunction has been found to support a contradiction. When a cell contains two or more contradictory values, the union of the support sets of those values is a nogood set.

When a contradiction is detected, the nogood set for that contradiction is saved to let the backtracker know not to try that combination again. To make it easy for the backtracking mechanism, the nogood set is not stored directly: it is distributed to each premise in the nogood set. Each premise gets a copy of the set with itself removed. For example, if the nogood set is {A B C ... }, then the premise A gets the set {B C ... }, the premise B gets the set {A C ... }, and so on. For any given premise, the list of all the partial nogood sets that have been accumulated from contradictions that the premise has participated in can be obtained with the premise-nogoods accessor.

Once the nogood set is saved, the backtracker chooses a hypothetical premise from the nogood set (if any) and retracts it. The retraction activates the propagators that are neighbors of cells with values previously supported by that hypothetical, including the propagator that originally asserted that hypothetical, causing that propagator to assert a different hypothetical, if possible. If there are no hypothetical premises in the nogood set, the backtracker has no options, so it returns a failure.

Of course there is a lot of bookkeeping that needs to be done to make this work. Let's understand how that can be implemented.

Hypotheticals are made and controlled by binary-amb

The simplest choice propagator is constructed by binary-amb. The result of calling binary-amb on a cell is a binary-amb propagator with the cell as both an input and an output. A binary-amb propagator modulates the value of the cell to be either true or false, until a consistent assignment is found.

The procedure binary-amb introduces two new premises, which are marked as hypothetical premises. A hypothetical premise is one whose belief is allowed to be automatically varied as needed.

The binary-amb procedure initializes the cell with a contradiction: the procedure make-hypotheticals creates both a true value and a false value, each supported by a new hypothetical premise, and adds both values to the content of the cell. Adding these values activates the cell, calling its test-content! procedure, which starts the contradiction-handling mechanism, which ultimately alerts the binary-amb propagator of the unhappy cell. The contradiction will then be fixed by the binary-amb propagator's activate! procedure amb-choose:

(define (binary-amb cell)
  (let ((premises (make-hypotheticals cell '(#t #f))))
    (let ((true-premise (car premises))
          (false-premise (cadr premises)))
      (define (amb-choose)
        (let ((reasons-against-true
               (filter all-premises-in?
                       (premise-nogoods true-premise)))
              (reasons-against-false
               (filter all-premises-in?
                       (premise-nogoods false-premise))))
          (cond ((null? reasons-against-true)
                 (mark-premise-in! true-premise)
                 (mark-premise-out! false-premise))
                ((null? reasons-against-false)
                 (mark-premise-out! true-premise)
                 (mark-premise-in! false-premise))
                (else
                 (mark-premise-out! true-premise)
                 (mark-premise-out! false-premise)
                 (process-contradictions
                  (pairwise-union reasons-against-true
                                  reasons-against-false)
                  cell)))))
      (let ((me (propagator (list cell) (list cell)
                            amb-choose 'binary-amb)))
        (set! all-amb-propagators
              (cons me all-amb-propagators))
        me))))

The amb-choose procedure uses the premise nogoods to determine whether the premise supporting the true value or the premise supporting the false value may be believed. Each element of the premise-nogoods of a premise is a set of premises such that if they are all believed, the premise cannot be believed. So if amb-choose finds any fully supported premise nogood for a premise, that premise cannot be believed.

If the premise supporting the true value or the premise supporting the false value is believable, amb-choose asserts true or false respectively. If neither is believable, it defers to higher-level contradiction processing (process-contradictions) in the hope that after the beliefs in other premises are modulated, it may be possible to assert true or false when this propagator is reactivated. The argument given to process-contradictions, constructed by pairwise-union, is a set of nogoods. Each of these nogoods is the union of a set of premises that rule out the choice of true and a set of premises that rule out a choice of false. Thus, any one of these nogoods would prevent the choice of either alternative. 14

(define (pairwise-union nogoods1 nogoods2)
  (append-map (lambda (nogood1)
                (map (lambda (nogood2)
                       (support-set-union nogood1 nogood2))
                     nogoods2))
              nogoods1))
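To make the resolution step concrete, here is a stand-alone sketch in which a support set is assumed to be a plain list of premise symbols. The names set-union and pairwise-union-sketch are hypothetical, and apply with append stands in for append-map so that the example needs no list library.

```scheme
;; Support sets as lists of symbols (an assumption for this sketch).
(define (set-union s1 s2)
  (cond ((null? s1) s2)
        ((memq (car s1) s2) (set-union (cdr s1) s2))
        (else (cons (car s1) (set-union (cdr s1) s2)))))

;; Every nogood against "true" is combined with every nogood against "false".
(define (pairwise-union-sketch nogoods1 nogoods2)
  (apply append
         (map (lambda (nogood1)
                (map (lambda (nogood2) (set-union nogood1 nogood2))
                     nogoods2))
              nogoods1)))

;; Suppose {a} and {b c} each rule out true, while {d} and {a e}
;; each rule out false.
(pairwise-union-sketch '((a) (b c)) '((d) (a e)))
;; ((a d) (a e) (b c d) (b c a e))
```

Each resulting set rules out both alternatives at once. For instance, believing a and d together leaves the cell with no consistent value, so {a d} is itself a nogood that no longer mentions the cell's own hypothetical premises.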

Learning from contradictions

The procedure process-contradictions saves all of the nogoods it received, distributing the information in the nogoods to the premise nogoods of the premises. It then chooses a nogood to disbelieve by retracting one of its hypothetical premises, if there are any.

(define (process-contradictions nogoods complaining-cell)
  (update-failure-count!)
  (for-each save-nogood! nogoods)
  (let-values (((to-disbelieve nogood)
                (choose-premise-to-disbelieve nogoods)))
    (maybe-kick-out to-disbelieve nogood complaining-cell)))

The procedure save-nogood! augments the premise-nogoods of each premise in the given nogood set with the set of other premises it is incompatible with. This is how the system learns from its past failures. The premise being updated is not included in its own premise nogood sets, because a premise may not be incompatible with itself.

(define (save-nogood! nogood)
  (for-each (lambda (premise)
              (set-premise-nogoods! premise
                (adjoin-support-with-subsumption
                 (support-set-remove nogood premise)
                 (premise-nogoods premise))))
            (support-set-elements nogood)))

The new premise nogood may either subsume or be subsumed by one of the existing premise nogoods; minimal premise nogoods are most useful.
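The procedure adjoin-support-with-subsumption is not shown in this section. One plausible reading of the sentence above is sketched here under stated assumptions: support sets are lists of premise symbols, the per-premise nogoods live in a global association list, a new set is dropped when an existing set is already a subset of it, and existing sets that are supersets of the new one are discarded. All of the names below are hypothetical.

```scheme
;; Hypothetical bookkeeping: premise -> list of partial nogood sets.
(define nogoods-table '())

(define (keep pred lst)
  (cond ((null? lst) '())
        ((pred (car lst)) (cons (car lst) (keep pred (cdr lst))))
        (else (keep pred (cdr lst)))))

(define (subset? s1 s2)
  (cond ((null? s1) #t)
        ((memq (car s1) s2) (subset? (cdr s1) s2))
        (else #f)))

(define (nogoods-of premise)
  (let ((entry (assq premise nogoods-table)))
    (if entry (cdr entry) '())))

(define (set-nogoods-of! premise sets)
  (set! nogoods-table
        (cons (cons premise sets)
              (keep (lambda (entry) (not (eq? (car entry) premise)))
                    nogoods-table))))

;; Keep only minimal sets: a smaller nogood makes its supersets redundant.
(define (adjoin-with-subsumption new-set sets)
  (if (pair? (keep (lambda (old) (subset? old new-set)) sets))
      sets
      (cons new-set
            (keep (lambda (old) (not (subset? new-set old))) sets))))

(define (save-nogood-sketch! nogood)
  (for-each
   (lambda (premise)
     (set-nogoods-of! premise
       (adjoin-with-subsumption
        (keep (lambda (p) (not (eq? p premise))) nogood)
        (nogoods-of premise))))
   nogood))

(save-nogood-sketch! '(a b c))
(nogoods-of 'a)                 ; ((b c))
(save-nogood-sketch! '(a b))    ; a smaller nogood is discovered later
(nogoods-of 'a)                 ; ((b))
(nogoods-of 'c)                 ; ((a b))
```

After the second contradiction, premise a only needs to remember that b alone rules it out. The earlier, larger set {b c} adds nothing and is dropped.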

Resolving the contradiction

A contradiction is resolved by retracting one of the premises in the nogood set that supports the contradiction. The only premises that can be retracted are the hypotheticals, which are asserted "for the sake of argument." If there is more than one nogood set supporting a contradiction, we choose one with the smallest number of hypotheticals, because disbelieving a small nogood set rules out more possibilities than disbelieving a nogood set with a larger number of hypotheticals.

(define (choose-premise-to-disbelieve nogoods)
  (choose-first-hypothetical
   (car (sort-by nogoods
                 (lambda (nogood)
                   (count hypothetical?
                          (support-set-elements nogood)))))))

However, the choice of which hypothetical from the selected nogood set to reject is not apparent. Here we arbitrarily choose the first hypothetical premise available in the nogood set.

(define (choose-first-hypothetical nogood)
  (let ((hyps (support-set-filter hypothetical? nogood)))
    (values (and (not (support-set-empty? hyps))
                 (car (support-set-elements hyps)))
            nogood)))
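The selection policy can be exercised on its own with the following sketch. It assumes that nogoods are lists of premise symbols and that the hypothetical premises are the ones listed in a global named hypotheticals. It returns a two-element list where the procedures above return two values, and every name in it is hypothetical.

```scheme
;; Which premises are hypothetical is fixed by this list (an assumption).
(define hypotheticals '(h1 h2 h3))
(define (hypothetical-sketch? premise)
  (if (memq premise hypotheticals) #t #f))

(define (count-hypotheticals nogood)
  (cond ((null? nogood) 0)
        ((hypothetical-sketch? (car nogood))
         (+ 1 (count-hypotheticals (cdr nogood))))
        (else (count-hypotheticals (cdr nogood)))))

;; The nogood with the fewest hypotheticals; the earliest wins ties.
(define (fewest-hypotheticals nogoods)
  (let loop ((best (car nogoods)) (rest (cdr nogoods)))
    (cond ((null? rest) best)
          ((< (count-hypotheticals (car rest)) (count-hypotheticals best))
           (loop (car rest) (cdr rest)))
          (else (loop best (cdr rest))))))

(define (first-hypothetical nogood)
  (cond ((null? nogood) #f)
        ((hypothetical-sketch? (car nogood)) (car nogood))
        (else (first-hypothetical (cdr nogood)))))

;; Returns (premise-to-disbelieve nogood); the premise is #f if none exists.
(define (choose-sketch nogoods)
  (let ((nogood (fewest-hypotheticals nogoods)))
    (list (first-hypothetical nogood) nogood)))

(choose-sketch '((h1 h2 p) (q h3) (h1 h3 h2)))   ; (h3 (q h3))
(choose-sketch '((p q)))                         ; (#f (p q))
```

The second call shows the case in which nothing can be retracted: the chosen nogood contains no hypotheticals, so the first element is #f.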

The procedure maybe-kick-out finishes the job of resolving the contradiction. If the chooser was able to find a suitable hypothesis to disbelieve, then that hypothesis is retracted and propagation continues normally. Otherwise, the propagation process is stopped and the user is informed about the contradiction.

(define (maybe-kick-out to-disbelieve nogood cell)
  (if to-disbelieve
      (mark-premise-out! to-disbelieve)
      (abort-process (list 'contradiction cell))))

Contradictions discovered in a cell

If in the process of adding content to a cell a contradiction is discovered, the unhappy cell calls handle-cell-contradiction with itself as the argument. At that moment the strongest value in the cell is the contradiction object, and the support of the contradiction object is the irritating nogood set. This can be handed off to process-contradictions to deal with.

(define (handle-cell-contradiction cell)
  (let ((nogood (support-layer-value (cell-strongest cell))))
    (process-contradictions (list nogood) cell)))

This is all that needs to be done to support dependency-directed backtracking.

Non-binary amb

Although binary-amb can be used in the formulation of many problems, most choices are not binary. It is possible to construct an n-ary choice mechanism from binary-amb by building a circuit of conditional propagators controlled by cells whose true or false values are modulated by binary-amb propagators, but this is very inefficient and introduces lots of extra machinery. So we provide a native n-ary choice mechanism with p:amb. The procedure p:amb is analogous to binary-amb. For binary-amb there are exactly two choices, #t or #f, for the value in the cell, and each is supported by a hypothetical premise. When p:amb is applied to a cell and a list of possible values, the procedure make-hypotheticals adds those values to the cell, each supported by a new hypothetical premise.

When the propagator constructed by p:amb is activated, the procedure amb-choose is called. It first tries to find a hypothetical premise, among its hypotheticals, that is not ruled out by its premise-nogoods. If there is one, it marks that premise in and marks all of the other premises out, thus choosing the value associated with that premise as the value of the cell. If none of the hypothetical premises can be believed, it marks all of its premises out and makes a new set of nogoods to pass to process-contradictions, which will retract a hypothetical premise from one of those nogoods, if possible. The generalization of the procedure pairwise-union to take more than two sets is cross-product-union. As before, this is a resolution step.

(define (p:amb cell values)
  (let ((premises (make-hypotheticals cell values)))
    (define (amb-choose)
      (let ((to-choose
             (find (lambda (premise)
                     (not (any all-premises-in?
                               (premise-nogoods premise))))
                   premises)))
        (if to-choose
            (for-each (lambda (premise)
                        (if (eq? premise to-choose)
                            (mark-premise-in! premise)
                            (mark-premise-out! premise)))
                      premises)
            (let ((nogoods
                   (cross-product-union
                    (map (lambda (premise)
                           (filter all-premises-in?
                                   (premise-nogoods premise)))
                         premises))))
              (for-each mark-premise-out! premises)
              (process-contradictions nogoods cell)))))
    (let ((me (propagator (list cell) (list cell)
                          amb-choose 'amb)))
      (set! all-amb-propagators
            (cons me all-amb-propagators))
      me)))

Choice propagators built with p:amb introduce only as many hypothetical premises as there are choices. Constructions for n > 2 choices based on binary-amb introduce about twice that many premises.
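The resolution step performed by cross-product-union can be illustrated with a small standalone sketch. It assumes, purely for illustration, that a nogood is a plain list of symbols and that each premise contributes a list of such nogoods; the names set-union, sketch:pairwise-union, and sketch:cross-product-union are hypothetical stand-ins, not the system's actual support-set code.

```scheme
;; Sketch only: sets are plain lists of symbols, which is an assumption
;; made for this example and not the system's support-set representation.
(define (set-union a b)
  (cond ((null? a) b)
        ((memq (car a) b) (set-union (cdr a) b))
        (else (cons (car a) (set-union (cdr a) b)))))

;; Every union of one nogood from the first list with one from the second.
(define (sketch:pairwise-union nogoods1 nogoods2)
  (apply append
         (map (lambda (n1)
                (map (lambda (n2) (set-union n1 n2))
                     nogoods2))
              nogoods1)))

;; Generalization to any number of nogood lists: pick one nogood from
;; each list and union the picks, for every possible combination.
(define (sketch:cross-product-union nogoodss)
  (if (null? nogoodss)
      '(())                             ; exactly one empty combination
      (sketch:pairwise-union
       (car nogoodss)
       (sketch:cross-product-union (cdr nogoodss)))))

(define example-result
  (sketch:cross-product-union '(((a b) (c)) ((d)) ((b e)))))
;; example-result is ((a d b e) (c d b e))
```

Each resulting set combines one reason for rejecting every alternative, so it describes a set of outside premises under which no choice remains.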

7.5.2 Solving combinatorial puzzles

To demonstrate the use of dependency-directed backtracking to solve combinatorial puzzles efficiently, consider the famous "multiple dwelling" puzzle:[29]

Baker, Cooper, Fletcher, Miller, and Smith live on different floors of an apartment house that has only five floors. Baker does not live on the top floor. Cooper does not live on the bottom floor. Fletcher does not live on either the top or the bottom floor. Miller lives on a higher floor than does Cooper. Smith does not live on a floor adjacent to Fletcher's. Fletcher does not live on a floor adjacent to Cooper's. Where does everyone live?

We can set this up as a propagator problem. Here is a very unsophisticated formulation of the problem:

(define (multiple-dwelling)
  (let-cells (baker cooper fletcher miller smith)
    (let ((floors '(1 2 3 4 5)))
      (p:amb baker floors) (p:amb cooper floors)
      (p:amb fletcher floors) (p:amb miller floors)
      (p:amb smith floors)
      (require-distinct
       (list baker cooper fletcher miller smith))
      (let-cells ((b=5 #f) (c=1 #f) (f=5 #f)
                  (f=1 #f) (m>c #t) (sf #f)
                  (fc #f) (one 1) (five 5)
                  s-f as-f f-c af-c)
        (p:= five baker b=5)      ;Baker is not on 5.
        (p:= one cooper c=1)      ;Cooper is not on 1.
        (p:= five fletcher f=5)   ;Fletcher is not on 5.
        (p:= one fletcher f=1)    ;Fletcher is not on 1.
        (p:> miller cooper m>c)   ;Miller is above Cooper.
        (c:+ fletcher s-f smith)  ;Fletcher and Smith
        (c:abs s-f as-f)          ; are not on
        (p:= one as-f sf)         ; adjacent floors.
        (c:+ cooper f-c fletcher) ;Cooper and Fletcher
        (c:abs f-c af-c)          ; are not on
        (p:= one af-c fc)         ; adjacent floors.
        (list baker cooper fletcher miller smith)))))

This says that Baker, Cooper, Fletcher, Miller, and Smith all choose to live on one of the five floors, and their choices must be distinct. We then see the constraints on their choices represented as a propagator circuit. Some cells, such as b=5, are initialized to a boolean value. Thus, the line (p:= five baker b=5) represents the constraint that Baker does not live on the fifth floor. The constraint that Cooper and Fletcher do not live on adjacent floors is implemented by the assignment of fc to #f and the last three constraints.
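To make the last three constraints concrete, here is a minimal sketch in ordinary (directional) Scheme of the values that the cells f-c, af-c, and fc would hold for given floors. The procedure name sketch:adjacency-cells is hypothetical, and the sketch computes in one direction only, whereas the constraint propagators c:+ and c:abs can deduce values in several directions.

```scheme
;; Directional stand-in for the three-propagator fragment:
;;   (c:+ cooper f-c fletcher)  means  cooper + f-c = fletcher
;;   (c:abs f-c af-c)           means  af-c = |f-c|
;;   (p:= one af-c fc)          means  fc = (= 1 af-c)
(define (sketch:adjacency-cells cooper fletcher)
  (let* ((f-c (- fletcher cooper))
         (af-c (abs f-c))
         (fc (= 1 af-c)))
    (list f-c af-c fc)))

(define far-apart (sketch:adjacency-cells 2 4))  ; (2 2 #f)
(define adjacent  (sketch:adjacency-cells 2 3))  ; (1 1 #t)
```

In the second case the computed #t clashes with the #f that fc was initialized with, which is the contradiction that rules out that pair of floors.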

To use the propagator system we need to define all the primitive propagators, with the appropriate layering of the data:

(define (setup-propagator-system arithmetic)
  (define layered-arith
    (extend-arithmetic layered-extender arithmetic))
  (install-arithmetic! layered-arith)
  (install-core-propagators! merge-value-sets
                             layered-arith
                             layered-propagator-projector))

This rather complicated setup procedure gives the information required to build and install the propagators with an arithmetic, layered with premises that can be tracked and reasons that are available for debugging. The default setup, when the propagator system is loaded, is for numerical data:

(setup-propagator-system numeric-arithmetic)

We are now in a position to run our puzzle example:

(initialize-scheduler)
(define answers (multiple-dwelling))
(run)
(map (lambda (cell)
       (get-base-value (cell-strongest cell)))
     answers)
;Value: (3 2 4 5 1)

*number-of-calls-to-fail*
;Value: 106

We see the (correct) result: the floor on which each protagonist lives. We also see that it takes roughly 100 failed assignments to find a correct assignment. 15 It turns out that this assignment is unique: there are no other assignments consistent with the constraints given.
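As an independent check on the answer and on its uniqueness, the puzzle is small enough to enumerate exhaustively. The following sketch uses plain Scheme and none of the propagator machinery; the names consistent?, try!, tried, and solutions are introduced here only for illustration.

```scheme
;; Brute-force check: examine every assignment of five people to five
;; floors and keep those that satisfy all of the puzzle's constraints.
(define floors '(1 2 3 4 5))

(define (distinct? items)
  (cond ((null? items) #t)
        ((member (car items) (cdr items)) #f)
        (else (distinct? (cdr items)))))

(define (consistent? baker cooper fletcher miller smith)
  (and (distinct? (list baker cooper fletcher miller smith))
       (not (= baker 5))
       (not (= cooper 1))
       (not (= fletcher 5))
       (not (= fletcher 1))
       (> miller cooper)
       (not (= (abs (- smith fletcher)) 1))
       (not (= (abs (- fletcher cooper)) 1))))

(define tried 0)
(define solutions '())

(define (try! baker cooper fletcher miller smith)
  (set! tried (+ tried 1))
  (if (consistent? baker cooper fletcher miller smith)
      (set! solutions
            (cons (list baker cooper fletcher miller smith)
                  solutions))))

(for-each
 (lambda (baker)
   (for-each
    (lambda (cooper)
      (for-each
       (lambda (fletcher)
         (for-each
          (lambda (miller)
            (for-each
             (lambda (smith)
               (try! baker cooper fletcher miller smith))
             floors))
          floors))
       floors))
    floors))
 floors)

;; tried is 3125 and solutions is ((3 2 4 5 1))
```

The enumeration visits every assignment, so it confirms the result at the cost of far more trials than the propagator solution needed.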

Notice that the total number of unconstrained assignments is 5^5 = 3125, but we are solving this with only about 100 trials. We are able to do this because the system learns from its mistakes: For each failure it accumulates information about which sets of premises cannot be simultaneously believed. Correctly using this information prevents the investigation of paths that are hopeless given the results of previous experiments.
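The pruning idea can be sketched in a few lines, under the simplifying assumption that premises are symbols and a nogood is a list of premises that cannot all be believed at once. The premise names and the procedures sketch:subset? and ruled-out? are hypothetical; the real system tracks this information through premise-nogoods and support sets.

```scheme
;; A candidate set of beliefs is hopeless if it contains every premise
;; of some nogood that has already been learned.
(define (sketch:subset? small big)
  (cond ((null? small) #t)
        ((memq (car small) big) (sketch:subset? (cdr small) big))
        (else #f)))

(define (ruled-out? believed nogoods)
  (cond ((null? nogoods) #f)
        ((sketch:subset? (car nogoods) believed) #t)
        (else (ruled-out? believed (cdr nogoods)))))

;; Hypothetical nogoods learned from two earlier failures.
(define learned-nogoods
  '((baker=5) (cooper=2 fletcher=3)))

(define pruned?
  (ruled-out? '(baker=3 cooper=2 fletcher=3) learned-nogoods))  ; #t
(define still-open?
  (not (ruled-out? '(baker=3 cooper=2 fletcher=4) learned-nogoods)))  ; #t
```

A single small nogood such as (cooper=2 fletcher=3) eliminates every full assignment that extends it, which is why so few trials are needed.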

Exercise 7.5: Yacht name puzzle

Formulate and solve the following puzzle using propagators. 16 Mary Ann Moore's father has a yacht and so has each of his four friends: Colonel Downing, Mr. Hall, Sir Barnacle Hood, and Dr. Parker. Each of the five also has one daughter and each has named his yacht after a daughter of one of the others. Sir Barnacle's yacht is the Gabrielle, Mr. Moore owns the Lorna; Mr. Hall the Rosalind. The Melissa, owned by Colonel Downing, is named after Sir Barnacle's daughter. Gabrielle's father owns the yacht that is named after Dr. Parker's daughter. Who is Lorna's father?

Exercise 7.6: Multiple-dwelling puzzle

It is easy to formulate the multiple-dwelling problem for the amb evaluator of section 5.4. In fact it is easier than for the propagator system, because we can think and write in terms of expressions. Indeed, you will be able to write constraints like the fact that Fletcher and Cooper do not live on adjacent floors as something like:

(require (not (= (abs (- fletcher cooper)) 1)))

rather than

(c:+ cooper f-c fletcher)
(c:abs f-c af-c)
(p:= one af-c fc)

where cells like f-c, af-c, and fc must be declared and one and fc are initialized. This is because the propagation system is a general wiring-diagram system rather than an expression system.

- a. Formulate and solve the multiple-dwelling problem using the amb evaluator of section 5.4. Instrument the system to determine the number of failures. How many failures does it take?

- b. Write a small compiler that converts constraints written as expressions into propagator diagram fragments. You will find that this is very easy. We made a first stab at this in exercise 7.1 on page 340. But here we really want to make a translator for code from section 5.4 to a propagator target. Demonstrate that your compilation gets the correct answer.

- c. How many failures are needed to solve the problem with the propagator diagram that you compiled into? If it takes more than about 200 failures you compiled into very bad code!

Exercise 7.7: Card game puzzle revisited

Redo exercise 5.17 using propagators.

Exercise 7.8: Type inference

In section 4.4.2 we built a type-inference engine as an example of the application of unification matching. In this exercise (which is really a substantial project) we implement type inference taking advantage of propagation.

- a. Given a Scheme program, construct a propagation network with a cell for every locus that is useful to type. Each such cell will be the repository of the type information accumulated about that locus in the program. Construct propagators that connect the cells and impose the type constraints implied by the program structure. Use unification match as the cell-merge operation. The unification may yield a contradiction if the program cannot be typed.

- b. There may be some cells of a program where a type is not sufficiently constrained by the types of the neighboring cells. However, propagation can be stimulated by dropping a general type variable into such a cell and allowing that variable to accumulate constraints by propagation. This is called "plunking." Try it.

- c. In hard cases a type inference may require making guesses (using hypotheticals) and backtracking on discovery of contradictions. Show cases where this is necessary.

- d. Tracking of premises and reasons enables the construction of informative error comments, but to do this you must associate each program locus with its cell so that things that are learned by propagation can be related to the program being annotated. You may use any kind of "sticky note" you like to associate the locus bidirectionally with the cell. In any case, try to make good explanations about why a particular locus has the type that was determined, or why a program could not be consistently typed.

- e. Is this implementation of type inference practical? Why or why not? If not, how can it be improved?

A moral of this story

Solving combinatorial puzzles is fun, but it is not the real value of what we have done. Indeed, "SAT solvers" are important for solving real-world problems of this kind. But there is a deeper message here for the design of computational systems. By generalizing our programming from expression structures to wiring diagrams (which can be inconvenient, but that can be mitigated with compiling) we have made it possible to smoothly integrate nondeterministic choice into programs in a natural and efficient way. We can introduce hypotheticals, which provide alternative values supported by propositions that may be discarded without pain. This gives us the freedom to treat things like quadratic equations correctly. They really have two solutions, and any computation based on a choice of one solution may decide to reject it, while the other solution may lead, after a long computation, to an acceptable outcome. For example, given that p:sqrt computes the traditional positive square root of a real number, we can build a directional propagator constructor p:honest-sqrt, with input cell x^2 and output cell x, that gives its users a (hidden) choice of square roots:

(define-p:prop (p:honest-sqrt (x^2) (x))
  (let-cells (mul +x)
    (p:amb mul '(-1 +1))
    (p:sqrt x^2 +x)
    (p:* mul +x x)))

What is important here is that such choices may be introduced without arranging that the enclosing machinery knows how to handle the ambiguity. For example, the constraint propagator that relates numbers to their squares can just use p:honest-sqrt:

(define-c:prop (c:square x x^2)
  (p:square x x^2)
  (p:honest-sqrt x^2 x))
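The effect of the hidden choice can be imitated, very roughly, in ordinary Scheme. This sketch lists both roots explicitly and lets a later test reject one of them; the names honest-sqrt-candidates and first-acceptable are hypothetical, and an explicit list of candidates is only a stand-in for the hypothetical premises and backtracking that p:amb provides.

```scheme
;; Both square roots, obtained as the positive root times -1 or +1,
;; mirroring the roles of the cells mul and +x in p:honest-sqrt.
(define (honest-sqrt-candidates x^2)
  (map (lambda (mul) (* mul (sqrt x^2)))
       '(-1 +1)))

;; Return the first candidate that a later computation accepts,
;; or #f if every candidate is rejected.
(define (first-acceptable candidates acceptable?)
  (cond ((null? candidates) #f)
        ((acceptable? (car candidates)) (car candidates))
        (else (first-acceptable (cdr candidates) acceptable?))))

(define negative-root
  (first-acceptable (honest-sqrt-candidates 9) negative?))
(define positive-root
  (first-acceptable (honest-sqrt-candidates 9) positive?))
```

Unlike this sketch, the propagator version does not require the consumer to know that a choice was made: rejection of one root arrives as a contradiction, and the other root is tried automatically.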

7.6 Propagation enables degeneracy

In the design of any significant system there are many implementation plans proposed for every component at every level of detail. However, in the system that is finally delivered this diversity of plans is lost, and usually only one unified plan is adopted and implemented. As in an ecological system, the loss of diversity in the traditional engineering process has serious consequences.

We rarely build degeneracy into programs, partly because it is expensive and partly because we traditionally have supplied no formal mechanisms for mediating its use. But the propagation idea provides a natural mechanism to incorporate degeneracy. The use of partial information structures in cells (introduced by Radul and Sussman [99]) allows multiple, perhaps overlapping, sources of information to be merged. We illustrated this with intervals in the stellar distance example in section 7.1. But there are many ways to merge partial information: partially specified symbolic expressions can be merged with unification, as shown in section 4.4.2. So the